CoderzColumn Tutorials

Learning is a lifelong process, but it helps to know what, where, and how to learn. Which skills should you develop, and which ones will boost your career? If you are unsure, you are in the right place. The tutorial section at CoderzColumn is dedicated to practical lessons that give you hands-on experience using Python for different purposes and writing code on your own. Our tutorials cover:

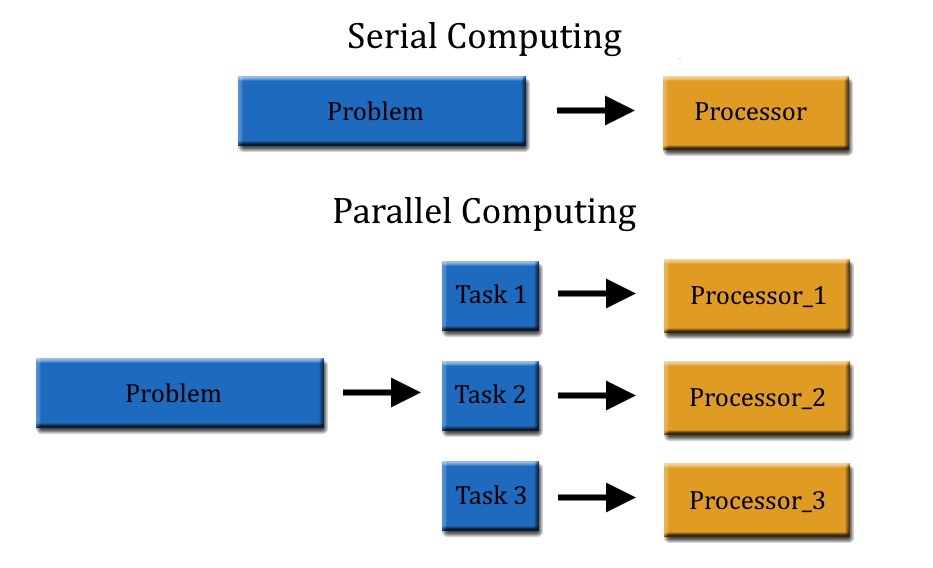

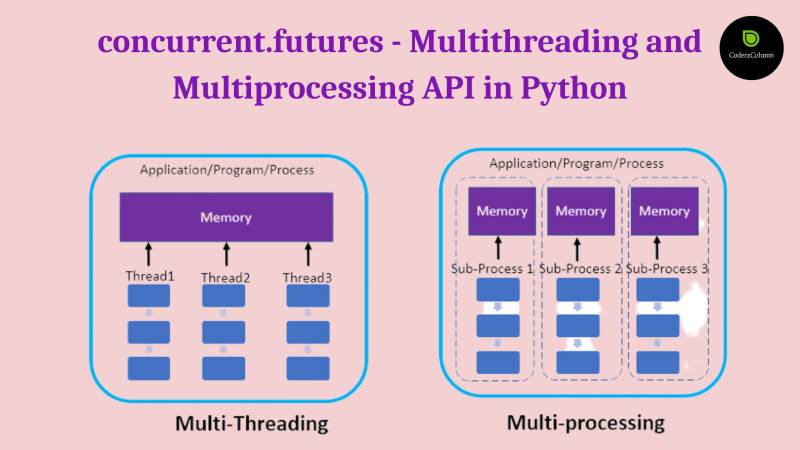

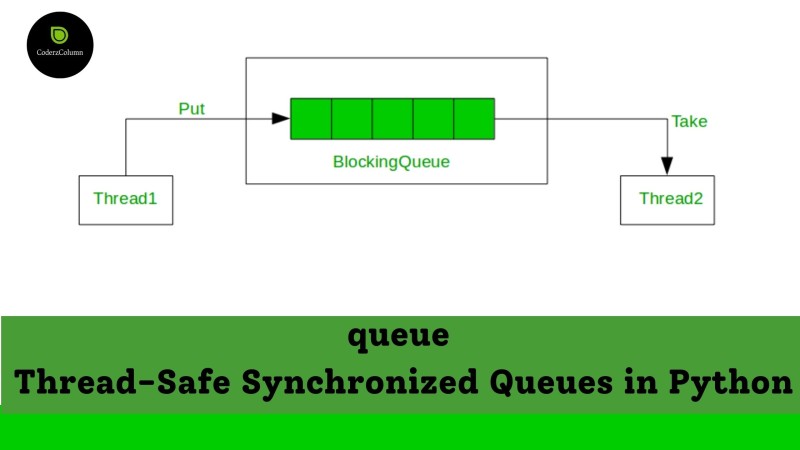

- Python Programming - threading, multiprocessing, concurrent.futures, asyncio, queue, imaplib, smtplib, email, mimetypes, cProfile, profile, tracemalloc, logging, ipywidgets, beautifulsoup, filecmp, glob, shutil, tarfile, zipfile, argparse, datetime, traceback, abc, contextlib, warnings, dataclasses, re, difflib, textwrap, collections, heapq, bisect, weakref, configparser, signal, ipaddress, xarray, pandas, subprocess, sched, etc.

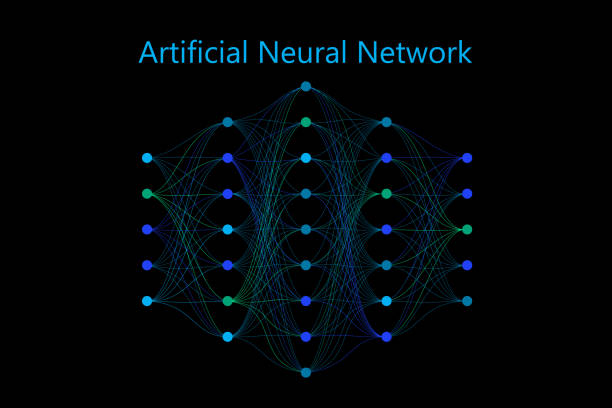

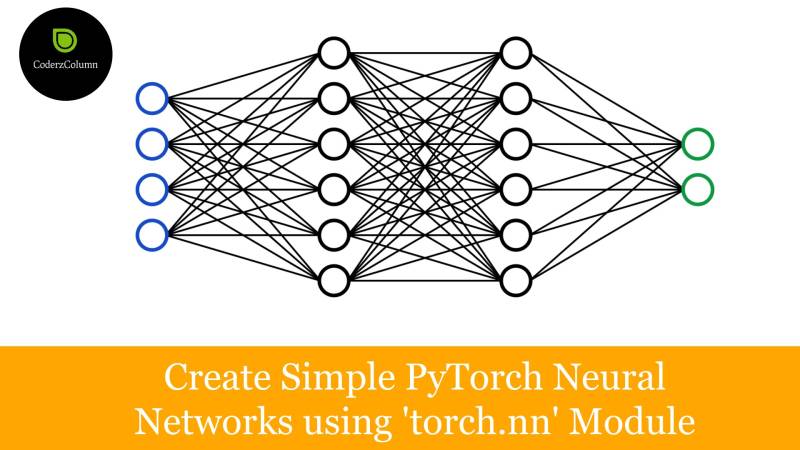

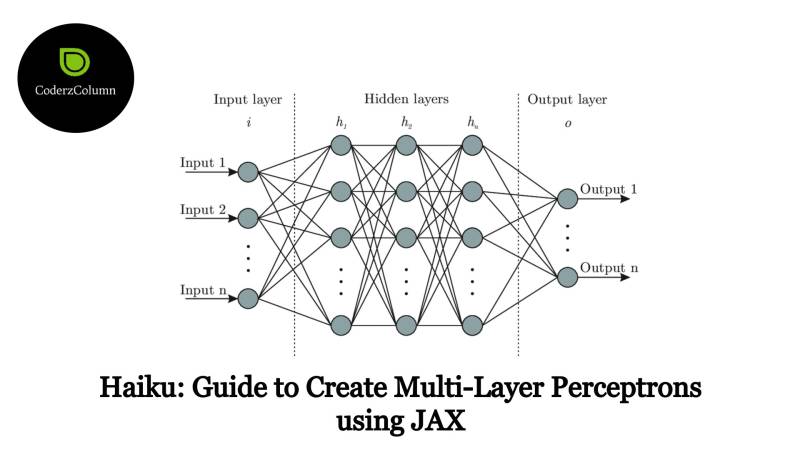

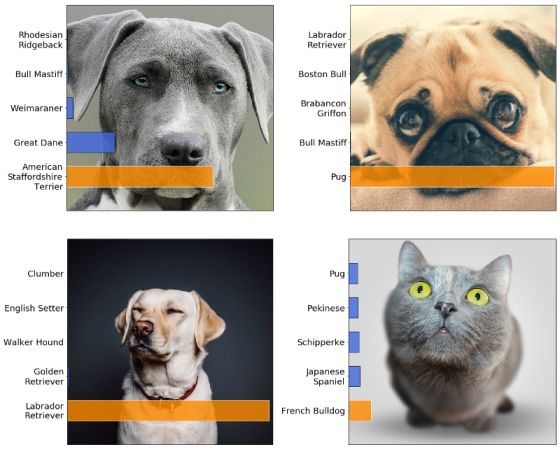

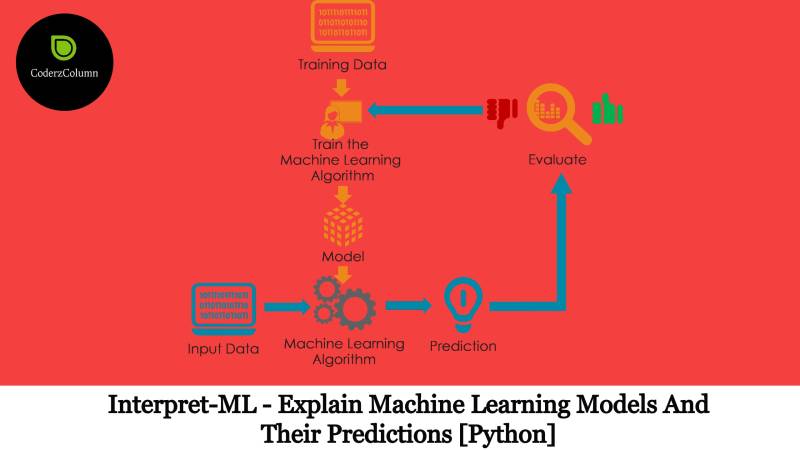

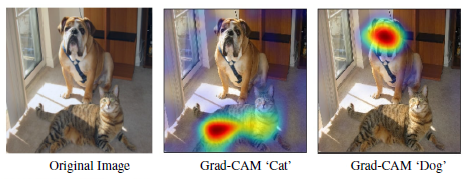

- Artificial Intelligence - PyTorch, Keras, TensorFlow, JAX, MXNet, Torchvision, Torchtext, GluonCV, GluonNLP, etc.

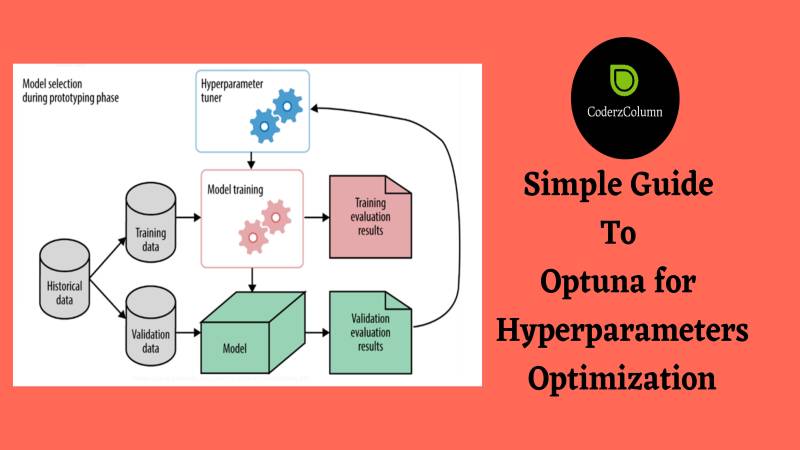

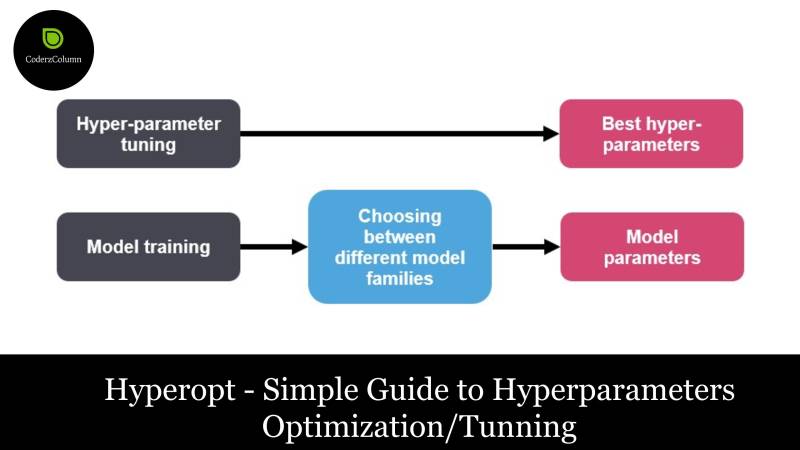

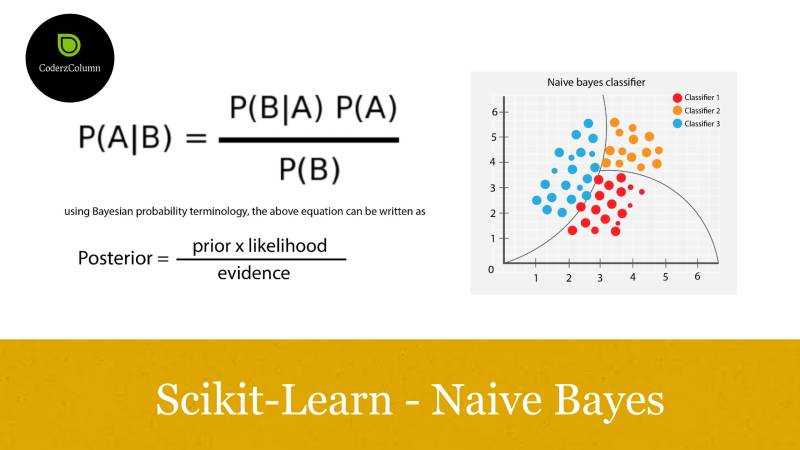

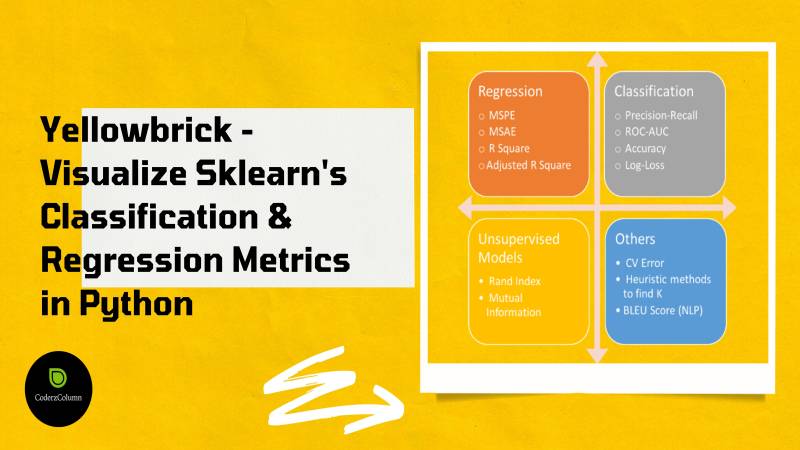

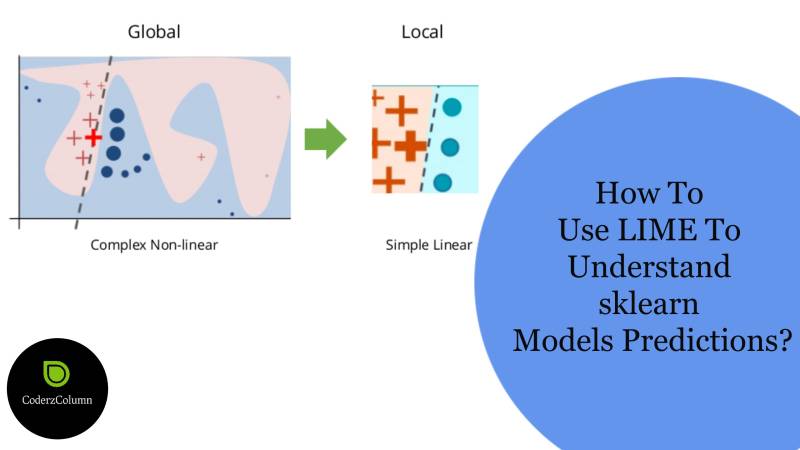

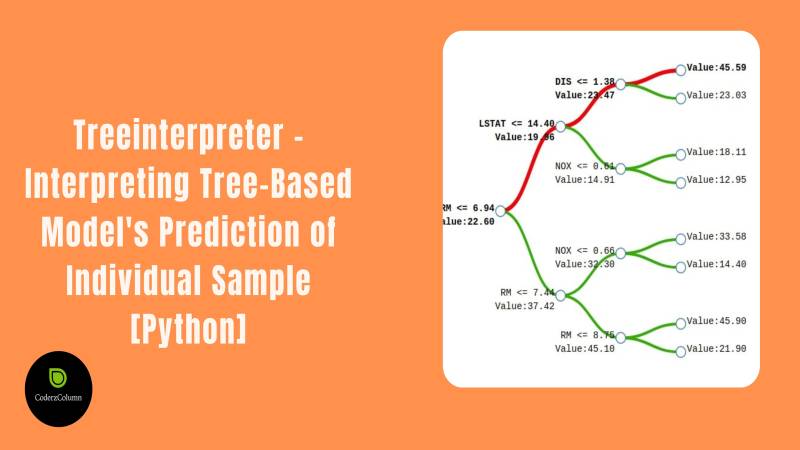

- Machine Learning - Scikit-Learn, Statsmodels, XGBoost, CatBoost, LightGBM, optuna, scikit-optimize, hyperopt, bayes_opt, scikit-plot, lime, shap, eli5, etc.

- Data Science - missingno, seaborn, pandas, sweetviz, numpy, networkx, xarray, awkward-array, etc.

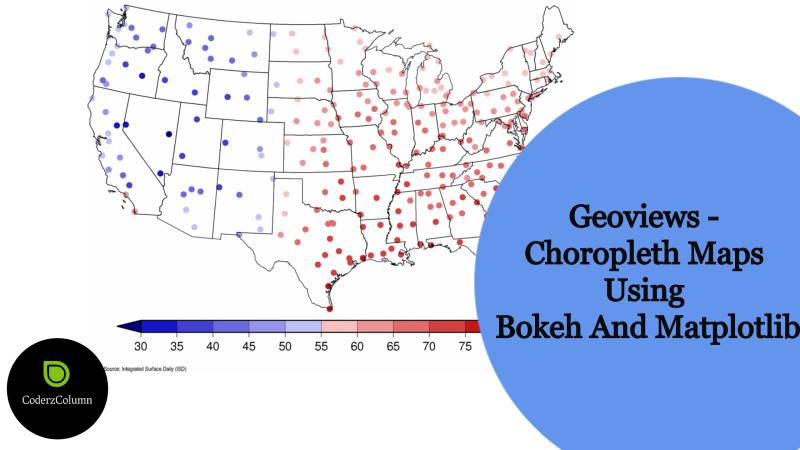

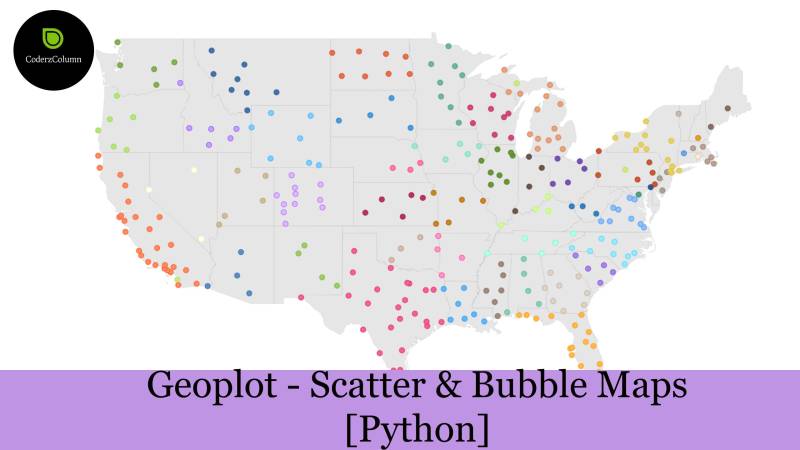

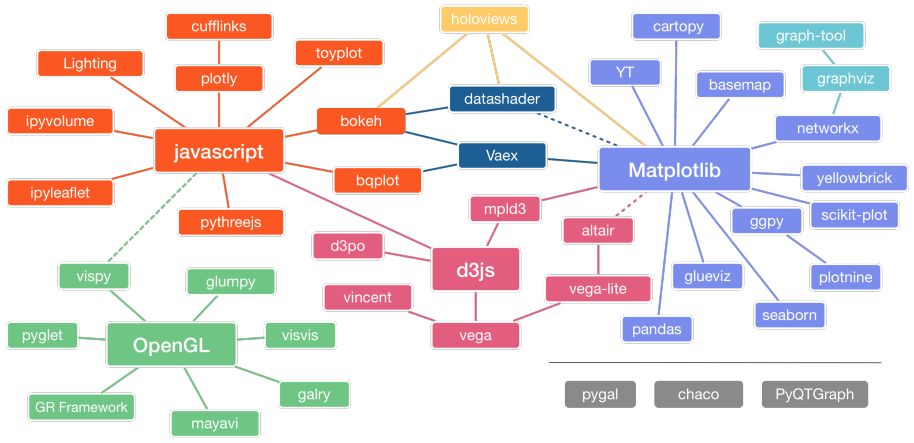

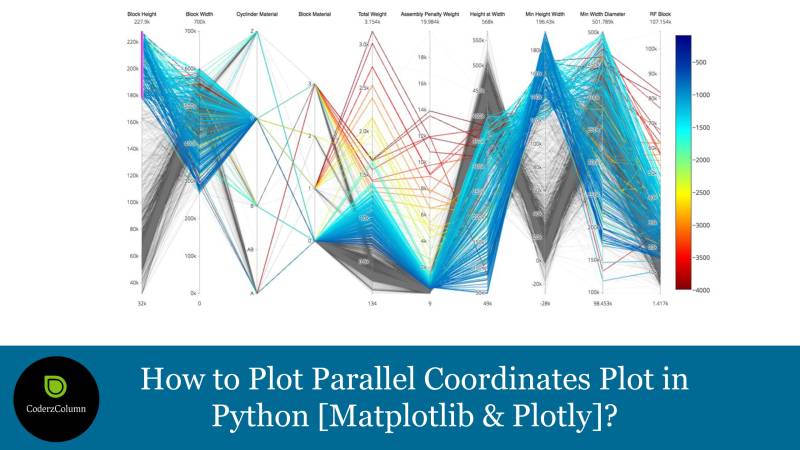

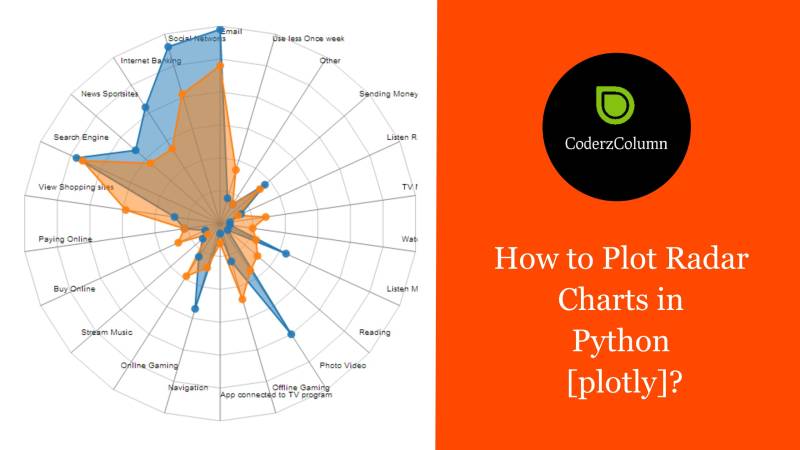

- Data Visualization - Matplotlib, Bokeh, Bqplot, Plotnine, Altair, Plotly, Cufflinks, Holoviews, dash, streamlit, panel, voila, geopandas, geoviews, folium, ipyleaflet, geoplot, cartopy, etc.

- Digital Marketing - SEO tactics, marketing strategies, social media marketing, and more.

For an in-depth understanding of the above concepts, check out the sections below.

Sunny Solanki

![How to Create Sunburst Chart / Diagram in Python [Plotly]?](https://storage.googleapis.com/coderzcolumn/static/tutorials/data_science/article_image/How%20to%20Create%20Sunburst%20Chart_Diagram%20in%20Python%20Plotly.jpg)
