How to Use eli5 to Interpret ML Models and their Predictions?¶

Scikit-learn is the go-to library for many machine learning practitioners around the world for quick construction of machine learning pipelines. It provides a very easy-to-use interface and has an implementation of many machine learning algorithms.

> Why Traditional ML Metrics are not Enough? Why Interpret Predictions of ML Models?¶

Even though scikit-learn provides an easy-to-use interface and implementations of many algorithms, metrics like accuracy, R2 score, ROC curve, and precision-recall curve do not tell us everything about how a model behaves. A model can have good accuracy and still fail to predict some target classes, or it can rely on irrelevant features to make decisions. For example, in a cat vs. dog task, a model might use background pixels to identify the object in an image when it should be using the pixels of the object itself.
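The point about good accuracy hiding a failing class can be checked with simple arithmetic. The snippet below is a minimal sketch with made-up labels (95 samples of one class, 5 of the other) and a hypothetical "model" that always predicts the majority class; it is not taken from any dataset used in this tutorial.

```python
# Hypothetical labels: 95 samples of class 0 and 5 samples of class 1.
y_true = [0] * 95 + [1] * 5

# A degenerate "model" that always predicts the majority class.
y_pred = [0] * 100

# Accuracy: fraction of samples where the prediction matches the label.
accuracy = sum(t == p for t, p in zip(y_true, y_pred)) / len(y_true)

# Recall for class 1: fraction of true class-1 samples that were found.
true_positives = sum(t == 1 and p == 1 for t, p in zip(y_true, y_pred))
recall_class_1 = true_positives / y_true.count(1)

print(f"Accuracy       : {accuracy:.2f}")
print(f"Recall class 1 : {recall_class_1:.2f}")
```

The accuracy looks strong even though class 1 is never predicted, which is the kind of failure a single metric can hide.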

We need something more robust which can give us insight into why the model is performing a particular way for a particular example. We need a way to understand how different features are contributing to predictions to better understand whether our model has generalized well and is reliable.

> What Solutions Python Offers for Interpreting ML Models?¶

Python has a library called 'eli5' which can help us better understand trained models on why they make a particular prediction on a particular sample. This can help us better understand our model to know about important features as well as the reliability of the model.

Apart from "eli5", Python offers other libraries like lime, SHAP, captum, treeinterpreter, etc for interpreting predictions of ML Models.

> What Can You Learn From This Article?¶

This tutorial covers how to use the Python library 'Eli5' to explain/interpret ML models and their predictions. It trains simple ML models created using scikit-learn on small toy datasets and then explains the models as well as their predictions using "Eli5". It covers the use of "Eli5" with structured data and unstructured data (text). For text data, it highlights parts of the text (words, n-grams, characters, etc.) to show their contributions to the prediction. The usage of the library is explained with both regression and classification tasks. We have tried to cover much of eli5's API as a part of this tutorial.

> List of Python ML Libraries Supported by "Eli5"¶

The eli5 library can explain models from the libraries listed below, though we'll primarily concentrate on sklearn as a part of this tutorial.

- Scikit-learn

- XGBoost

- CatBoost

- Keras

- lightning

- LightGBM

- sklearn-crfsuite

The eli5 can also handle sklearn pipelines of estimators and can reverse the encoding performed on data.

For complicated black-box estimators that eli5 has no dedicated methods to explain, it provides an implementation of the LIME algorithm. It also provides an implementation of the Grad-CAM algorithm for Keras image classifiers. Check the References or the end of the first section for links to tutorials that cover them.

> How to Install "Eli5"?¶

- Using pip

- pip install -U eli5

- Using conda

- conda install -c conda-forge eli5

Below, we have listed important sections of tutorial to give an overview of material covered.

Important Sections Of Tutorial¶

- What are Different Ways to Explain ML Models using "Eli5" (API Overview)?

- 1.1. Two Different Ways of Explaining ML Models

- 1.2. High-Level Eli5 API Overview

- 1.3. Other Useful Sub-Modules of "Eli5"

- Steps to Use "Eli5"

- Structured Data (Tabular) : Regression

- Linear Regression

- Train ML Model and Evaluate ML Metrics

- Explain Predictions using "Eli5" ("show_*()" Methods)

- Explain ML Model (Global Features Importances)

- Explain Individual Predictions (Local Features Importances)

- Decision Tree Regressor

- Train ML Model and Evaluate ML Metrics

- Explain Predictions using "Eli5" ("show_*()" Methods)

- Decision Tree Regressor

- Train ML Model and Evaluate ML Metrics

- Explain Predictions using "Eli5" ("explain_*()" Methods)

- Format Explanation as HTML

- Format Explanation as Text

- Format Explanation as Pandas DataFrame

- Format Explanation as Dictionary

- Structured Data (Tabular) : Classification

- Binary Classification: Logistic Regression

- Train ML Model and Evaluate ML Metrics

- Explain Predictions using "Eli5"

- MultiClass Classification: Logistic Regression

- MultiClass Classification: Decision Tree

- Unstructured Data (Text) : Classification

- Random Forest with Word-Level Tokenization

- Train ML Model and Evaluate ML Metrics

- Explain Predictions using "Eli5"

- Random Forest with Character-Level Tokenization

1. What are Different Ways to Explain ML Models using "Eli5" (API Overview)?¶

NOTE: Please feel free to skip this section if you are in a hurry and want to get started with the coding part. This is a theoretical overview of the library and its API. You can skim through it as well.

1.1. Two Different Ways of Explaining ML Models¶

At high-level, the eli5 provides two ways to understand ML models and their predictions:

- Global Features Importances (Model Level): It lets us analyze model weights to understand the global performance of the model.

- Local Features Importances (Individual Example Level): It lets us analyze individual data example's prediction to understand the local performance of the model. This can help us drill down why the particular prediction was made and which data features played what role in that prediction.
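The difference between the two views can be illustrated without any library. The sketch below assumes a hypothetical linear model with made-up weights and feature names: the global view is the weight table shared by all samples, while the local view is the per-feature contribution (weight times value) for one specific sample.

```python
# Hypothetical linear model: prediction = bias + sum(weight * value).
bias = 10.0
weights = {"rooms": 4.0, "age": -0.5, "crime": -2.0}


def local_contributions(sample):
    """Per-feature contribution (weight * value) for one sample."""
    return {name: weights[name] * sample[name] for name in weights}


def predict(sample):
    """Prediction is the bias plus the sum of all local contributions."""
    return bias + sum(local_contributions(sample).values())


sample_a = {"rooms": 6.0, "age": 10.0, "crime": 0.5}
sample_b = {"rooms": 3.0, "age": 40.0, "crime": 4.0}

# Global view: one table of weights, identical for every sample.
print("Global weights:", weights)

# Local view: contributions change from sample to sample.
for label, sample in [("A", sample_a), ("B", sample_b)]:
    print(f"Sample {label}:", local_contributions(sample), "->", predict(sample))
```

The same global weights lead to very different local explanations for the two samples, which is why both views are useful.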

1.2. High-Level Eli5 API Overview¶

The Eli5 API is organized according to above-mentioned two ways of explaining ML Model predictions.

- "explain_*()" Methods: Returns Explanation Object.

- explain_weights() - It takes any ML Model as input and returns Explanation object which has global features importances. This object can be formatted in different ways to display features importances.

- "Eli5" provides many different formatting functions through "eli5.formatters" that let us format the features importances present in an Explanation object in different ways. Below, we have listed the important ones.

- "format_as_html()" - Returns Explanation formatted as HTML.

- "format_html_styles()" - Returns only the CSS styles used by the HTML explanation, for use with "format_as_html()" when styles are rendered separately.

- "format_as_text()" - Returns Explanation formatted as Text explanation of model.

- "format_as_dict()" - Returns explanation formatted as dictionary.

- "format_as_dataframe()" - Returns explanation formatted as dataframe.

- "format_as_dataframes()" - Returns explanation formatted as dataframes.

- "format_as_image()" - Returns explanation formatted as image.

- Based on input model type it calls an underlying method to handle that model.

- "explain_weights_sklearn()"

- "explain_linear_regressor_weights()"

- "explain_linear_classifier_weights()"

- "explain_decision_tree()"

- "explain_rf_feature_importance()"

- "explain_permutation_importance()"

- "explain_weights_lightgbm()"

- "explain_weights_xgboost()"

- "explain_weights_catboost()"

- "explain_weights_lightning()"

- "explain_weights_sklearn_crfsuite()"

- explain_prediction() - It takes any ML Model and single data example as input and returns Explanation object which has local features importances explaining contributions of features for that individual example prediction. We can format this explanation object as per our requirements using method mentioned in previous point.

- Based on input model type it calls an underlying method to handle that model.

- "explain_prediction_sklearn()"

- "explain_prediction_linear_regressor()"

- "explain_prediction_linear_classifier()"

- "explain_prediction_tree_classifier()"

- "explain_prediction_tree_regressor()"

- "explain_prediction_lightning()"

- "explain_prediction_lightgbm()"

- "explain_prediction_xgboost()"

- "show_*()" Methods: Returns IPython.display.HTML Object.

- show_weights() - This method works exactly like "explain_weights()" with only difference being that it returns an IPython.display.HTML object instead. We can display this object in Jupyter notebook to show global weights.

- The usage of this method is same as calling explain_weights() to generate Explanation object and then calling format_as_html() with explanation object to display it.

- show_prediction() - This method works exactly like "explain_prediction()" with only difference being that it returns an IPython.display.HTML object instead. We can display this object in Jupyter notebook to show local features importances.

- The usage of this method is same as calling explain_prediction() to generate Explanation object and then calling format_as_html() with explanation object to display it.

Important Suggestion

We recommend that you use high-level API of "Eli5" to explain your ML Model and its predictions. It'll call appropriate underlying method based on ML Model type. You should use one of these 4 methods.

- show_weights()

- show_prediction()

- explain_weights()

- explain_prediction()

1.3. Other Useful Sub-Modules of "Eli5"¶

Apart from above mentioned methods, "Eli5" provides an implementation of two famous ML Model prediction interpretation algorithms.

- LIME (Local Interpretable Model-Agnostic Explanations) - Eli5 provides an implementation of the LIME algorithm through the "eli5.lime" module. It currently supports explaining predictions of text classification networks only.

- Grad-CAM (Gradient-weighted Class Activation Mapping) - Eli5 provides implementation of Grad-CAM through "eli5.keras" module. It currently supports explaining predictions of image classification networks using Grad-CAM.

We have not covered these modules in this tutorial. Please feel free to check below links if you are interested in them.

How to Use LIME Algorithm Implementation Available from Eli5 for Text Classification?¶

How to Use Keras Grad-CAM Implementation available from Eli5 for Image Classification?¶

NOTE

Please note that "Eli5" supports Keras version 2.3.1 or earlier. If you face an error related to "protobuf" while importing "eli5", install a lower version of it using the pip command below.

- pip install protobuf==3.20.*

2. Steps to Use "Eli5" ¶

2.1 Steps to Use "Eli5" to Explain ML Models¶

- Train ML Model

- Call "show_weights()" function of "eli5" with ML Model as first parameter.

- Output of function is IPython.display.HTML object which can be displayed in Notebook.

------------------ OR ------------------

- Train ML Model

- Call "explain_weights()" function of "eli5" with ML Model as first parameter.

- Output of function is Explanation object.

- Format "Explanation" object using one of "Eli5" formatters.

- "format_as_html()"

- "format_as_dataframe()"

- "format_as_dict()"

- "format_as_text()"

2.2 Steps to Use "Eli5" to Explain Predictions Of ML Models¶

- Train ML Model

- Call "show_prediction()" function of "eli5" with ML Model as first parameter and individual data example as second parameter.

- Output of function is IPython.display.HTML object which can be displayed in Notebook.

------------------ OR ------------------

- Train ML Model

- Call "explain_prediction()" function of "eli5" with ML Model as first parameter and individual data example as second parameter.

- Output of function is Explanation object.

- Format "Explanation" object using one of "Eli5" formatters.

- "format_as_html()"

- "format_as_dataframe()"

- "format_as_dict()"

- "format_as_text()"
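The "explain then format" steps listed above can be sketched as one small helper. This is only an illustration: the helper name explain_with_eli5 is hypothetical, and it assumes that eli5 is installed and that the model is an already fitted estimator supported by eli5. The eli5 imports are placed inside the function so the helper can be defined even where eli5 is missing.

```python
def explain_with_eli5(model, sample, feature_names):
    """Return text explanations of a fitted model and of one prediction."""
    # Imported lazily so this helper can be defined where eli5 is absent.
    import eli5
    from eli5.formatters import format_as_text

    names = list(feature_names)

    # Global explanation: model weights / feature importances.
    weights_explanation = eli5.explain_weights(model, feature_names=names)

    # Local explanation: feature contributions for a single data example.
    prediction_explanation = eli5.explain_prediction(
        model, sample, feature_names=names
    )

    # Any formatter can be applied to either Explanation object;
    # format_as_text() is used here because it needs no notebook.
    return (
        format_as_text(weights_explanation),
        format_as_text(prediction_explanation),
    )
```

Printing the two returned strings gives a plain-text version of what show_weights() and show_prediction() render as HTML in a notebook.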

NOTE

Please note that the "display" sub-module of the IPython library provides various functions that let us display rich contents like audio, video, images, HTML, Javascript, markdown, Latex, etc. in Jupyter Notebooks. Please feel free to check the link below if you are interested in it.

3. Structured Data (Tabular) : Regression Tasks ¶

First, we'll use eli5 charts for explaining machine learning models which are used for regression tasks and structured data.

Below, we have imported essential Python libraries that we'll use for our tutorial.

import eli5

print("Eli5 Version : {}".format(eli5.__version__))

import pandas as pd

import numpy as np

import sklearn

print("Scikit-Learn Version: {}".format(sklearn.__version__))

import warnings

warnings.filterwarnings("ignore")

3.1. Linear Regression¶

As a part of our first example, we'll be using the Boston housing dataset available from scikit-learn. The dataset has information about various housing attributes, and the target to predict is the median value of homes in thousands of dollars. Note that load_boston() was deprecated in scikit-learn 1.0 and removed in version 1.2, so the code below needs an older scikit-learn release.

If you want to learn about linear regression ML model available from scikit-learn then please feel free to check below link.

3.1.1 Load Dataset¶

Below, we have printed the dataset description explaining the meaning of various features and have also loaded the dataset as a pandas dataframe.

from sklearn.datasets import load_boston

boston = load_boston()

for line in boston.DESCR.split("\n")[5:27]:

    print(line)

boston_df = pd.DataFrame(data=boston.data, columns = boston.feature_names)

boston_df["Price"] = boston.target

boston_df.head()

3.1.2 Divide Dataset into Train and Test Sets¶

We'll now divide dataset into train(90%) and test(10%) sets using train_test_split() method of scikit-learn.

from sklearn.model_selection import train_test_split

X, Y = boston.data, boston.target

X_train, X_test, Y_train, Y_test = train_test_split(X, Y, train_size=0.90, test_size=0.1, random_state=123, shuffle=True)

X_train.shape, X_test.shape, Y_train.shape, Y_test.shape

3.1.3 Train Model and Calculate R2 Score¶

Below we have fitted a simple linear regression model to train data and then printed the r2 score of the model on both test and train data. If you are interested in learning about various machine learning metrics available from sklearn then please feel free to check our tutorial on the same.

from sklearn.linear_model import LinearRegression

lr = LinearRegression()

lr.fit(X_train, Y_train)

print("Test R^2 Score : ", lr.score(X_test, Y_test))

print("Train R^2 Score : ", lr.score(X_train, Y_train))
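For regressors, score() reports the R2 (coefficient of determination) value. As a rough illustration of what that number means, the sketch below computes it by hand on made-up values; the function name r2_score_manual and the data are hypothetical and not part of the tutorial's dataset.

```python
def r2_score_manual(y_true, y_pred):
    """Coefficient of determination: 1 - SS_res / SS_tot."""
    mean_true = sum(y_true) / len(y_true)
    # Residual sum of squares: error left after using the predictions.
    ss_res = sum((t - p) ** 2 for t, p in zip(y_true, y_pred))
    # Total sum of squares: error of always predicting the mean.
    ss_tot = sum((t - mean_true) ** 2 for t in y_true)
    return 1.0 - ss_res / ss_tot


y_true = [3.0, 5.0, 7.0, 9.0]

perfect = r2_score_manual(y_true, y_true)           # predictions match exactly
baseline = r2_score_manual(y_true, [6.0] * 4)       # always predict the mean

print("Perfect predictions R^2 :", perfect)
print("Mean-only baseline R^2  :", baseline)
```

A score of 1 means perfect predictions and a score of 0 means the model is no better than always predicting the mean of the targets.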

3.1.4 Explain ML Model and Predictions using "Eli5" ("show_*()" Methods)¶

The eli5 provides two common methods that can be used across different models.

- show_weights() - It displays the estimator's global weights as an HTML table, text, etc. These are generally the same as the coef_ and feature_importances_ attributes available with linear and tree or ensemble sklearn estimators.

- show_prediction() - It displays how features contribute to the estimator's prediction for a particular sample as an HTML table, tree, text, etc.

1. Explain ML Model using "show_weights()" (Global Features Importances)¶

The first method that we'll explore using eli5 is show_weights(). Below we have used show weights to show feature importance for the linear regression model which we trained in the previous cell.

from eli5 import show_weights

show_weights(lr, feature_names=boston.feature_names)

Important Parameters of "show_weights()"¶

Below we have given a list of important parameters of the show_weights() method which can help us modify visualizations generated by the method.

- feature_names: This parameter accepts a list of string specifying feature names.

- top: This parameter accepts int or (pos:int, neg:int) tuple. If we specify a single integer, then that many top features from the model will be included in the figure. If we specify a (pos: int, neg: int) tuple, then that many positive and negative features will be displayed.

- target_names: This parameter accepts a list of strings or a dictionary with a mapping from old target names to new target names.

- targets: This parameter accepts a list of target names for which we want weights to be displayed. This can be useful if our classification task has many classes and we want to see the weights of only a few of them.

- feature_re: This parameter accepts a string specifying a regular expression which lets us select feature names that match it.

- feature_filter: This parameter has the same usage as that of feature_re and lets us specify a callable which accepts a feature name and returns True/False. Based on that return value, the feature will be selected/rejected for display.

- show: This parameter accepts a list of string values specifying which sections of explanation to show. Below is a list of values available. Please make a note that not all sections will be available with all models. E.g : decision_tree won't be available with LinearRegression.

- targets - Shows feature weights for target class

- transition_features - Shows transition feature of a CRF model.

- feature_importances - Shows feature importances of the decision tree, random forest, etc.

- decision_tree - Shows decision tree.

- method - A string explaining the method.

- description - Text explaining method used by the model and its caveats.

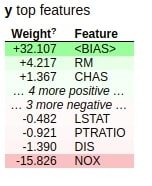

Below we have again used show_weights() to show model weights as a table but this time we are only displaying the top 7 important features.

show_weights(lr, feature_names=boston.feature_names, top=7)

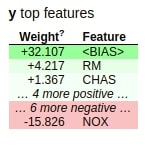

Below we are again displaying model weights as an HTML table with the top 3 positive features and 1 negative feature in the table.

show_weights(lr, feature_names=boston.feature_names, top=(3,1))

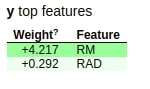
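To make the two forms of the top parameter concrete, here is a small library-free sketch of the selection logic. It is an assumption on our part that an integer top ranks features by absolute weight; the weights and the helper name select_top are made up for illustration and this is not eli5's own code.

```python
def select_top(weights, top):
    """Pick features in the spirit of the `top` parameter.

    weights: dict mapping feature name -> weight.
    top: int (largest absolute weights) or (pos, neg) tuple.
    Returns (name, weight) pairs sorted from highest to lowest weight.
    """
    ranked = sorted(weights.items(), key=lambda kv: kv[1], reverse=True)
    if isinstance(top, tuple):
        n_pos, n_neg = top
        positives = [kv for kv in ranked if kv[1] > 0][:n_pos]
        # Walk from the most negative weight upwards.
        negatives = [kv for kv in reversed(ranked) if kv[1] < 0][:n_neg]
        # Reverse so the result stays sorted from highest to lowest weight.
        return positives + negatives[::-1]
    by_magnitude = sorted(weights.items(), key=lambda kv: abs(kv[1]), reverse=True)
    return sorted(by_magnitude[:top], key=lambda kv: kv[1], reverse=True)


weights = {"a": 3.0, "b": -4.0, "c": 0.5, "d": 2.0, "e": -1.0}

print(select_top(weights, 3))        # three largest weights by magnitude
print(select_top(weights, (1, 2)))   # one positive and two negative features
```

With a tuple, the positive and negative sides are limited independently, so a small negative weight can appear even when larger positive weights are left out.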

Below we are displaying model weights only for features whose names start with the letter 'R'.

show_weights(lr, feature_names=boston.feature_names, top=5, feature_re=r"^R")

Below we have displayed model weights again but only for features whose names end with the character 'T'.

show_weights(lr, feature_names=boston.feature_names, top=5, feature_filter=lambda fet : fet.endswith("T"))
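The effect of the two filters used above can be previewed on the feature names alone. The sketch below assumes the regular expression is applied as a search over each name; the list of Boston column names is written out by hand and the helper names are hypothetical.

```python
import re

# Column names of the Boston housing data, written out by hand here.
feature_names = ["CRIM", "ZN", "INDUS", "CHAS", "NOX", "RM", "AGE",
                 "DIS", "RAD", "TAX", "PTRATIO", "B", "LSTAT"]


def filter_by_regex(names, pattern):
    """Keep names in which the regular expression is found."""
    return [name for name in names if re.search(pattern, name)]


def filter_by_callable(names, keep):
    """Keep names for which the callable returns True."""
    return [name for name in names if keep(name)]


starts_with_r = filter_by_regex(feature_names, r"^R")
ends_with_t = filter_by_callable(feature_names, lambda name: name.endswith("T"))

print("Names starting with R :", starts_with_r)
print("Names ending with T   :", ends_with_t)
```

A regular expression is enough for simple patterns, while a callable is handy when the selection rule is easier to write as Python code.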

Below we have used the show_weights() method to show which method was used by the model for prediction as well as a description explaining how the model works and its caveats.

show_weights(lr, feature_names=boston.feature_names, show=["method", "description"])

2. Explain Individual Predictions using "show_prediction()" (Local Features Importances)¶

The second important method that eli5 provides is show_prediction() which can be used to show how individual feature is contributing when making a prediction for a particular sample. This can give us more insights into how the model is performing for a particular sample of data.

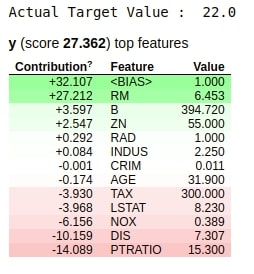

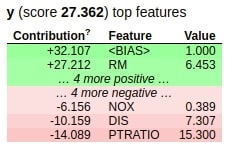

Below we are taking a random sample from test data and then plotting how individual feature is contributing to predicting output for this random sample along with feature values as an HTML table.

from eli5 import show_prediction

import random

# randint() includes both end points, so the last valid index is len(X_test) - 1.

rand = random.randint(0, len(X_test) - 1)

print("Actual Target Value : ", Y_test[rand])

show_prediction(lr, X_test[rand], feature_names=boston.feature_names, show_feature_values=True)

The above table starts with the BIAS value and then adds the contribution of each feature one by one to it to generate the final prediction.

Important Parameters of "show_prediction()"¶

The show_prediction() method has almost the same parameters as show_weights() with a few extra ones. Below, we have listed all parameters, with descriptions given only for the new ones.

- top: It has same meaning as in show_weights().

- top_targets: It accepts an int specifying the number of targets with the highest scores to show.

- target_names: It has same meaning as in show_weights().

- targets: It has same meaning as in show_weights().

- feature_names: It has same meaning as in show_weights().

- feature_re: It has same meaning as in show_weights().

- feature_filter: It has same meaning as in show_weights().

- show: It has same meaning as in show_weights().

- horizontal_layout: It accepts bool value. If set to True then feature weights tables for classification tasks are laid out horizontally else vertically.

- highlight_spaces: It accepts bool value specifying whether to highlight space between features when highlighting text data or not.

- force_weights: It accepts bool value specifying whether to show weights table if features are already highlighted in the text representation.

- preserve_density: It accepts bool value specifying intensities of the color of text sample when working with text data.

- show_feature_values: It accepts bool value specifying whether feature values should be shown along with weights in the table or not.

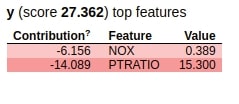

Below we are again plotting results from show_prediction() with only showing the top 5 features in the resulting table.

show_prediction(lr, X_test[rand], feature_names=boston.feature_names, show_feature_values=True, top=5)

Below we are plotting results from show_prediction() with only the features whose names contain the letter O.

show_prediction(lr, X_test[rand], feature_names=boston.feature_names, show_feature_values=True, feature_re="O")

3.2 Decision Tree ¶

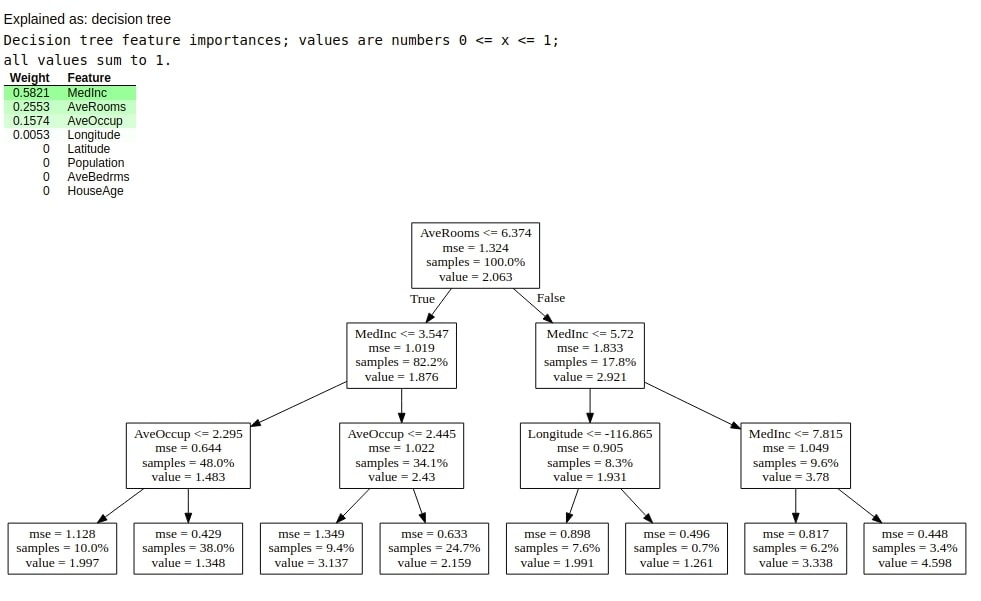

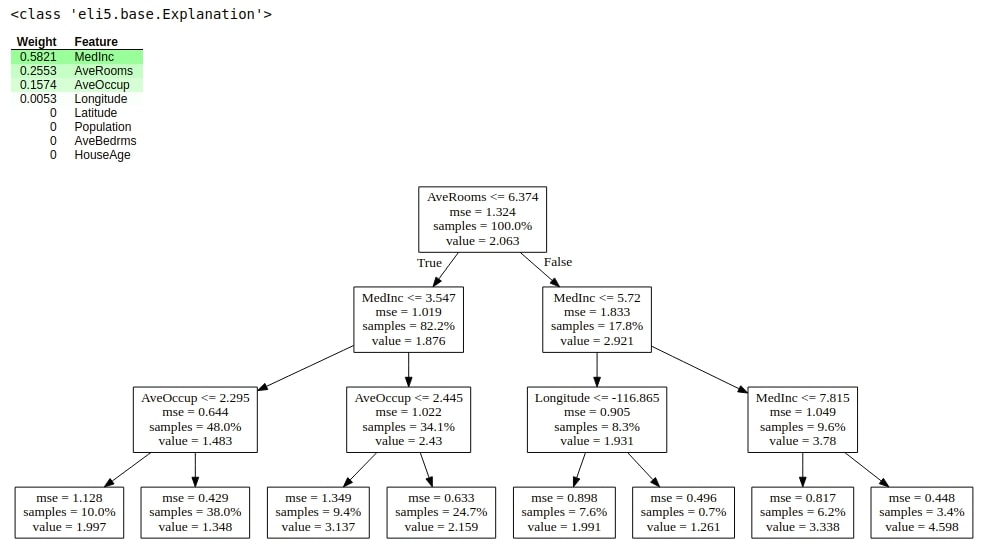

As a part of 2nd example, we'll be using the California housing dataset available from scikit-learn.

Please make a NOTE that we have created separate examples for decision tree because for decision tree "eli5" creates a tree-like visualization. For all other models, it's a simple table.

If you want to learn about decision tree ML model available from scikit-learn then please feel free to check below link.

3.2.1 Load Data, Train Model and Calculate ML Metrics¶

Below, we have loaded the dataset from sklearn and printed its description explaining the meaning of various features available in the dataset.

We have then divided data into train/test sets, fitted model on train data, and evaluated it on test data. We have printed the r2 score of the model on both train and test sets.

We'll be explaining tree estimators using the show_weights() and show_prediction() method as a part of this example.

from sklearn.datasets import fetch_california_housing

from sklearn.tree import DecisionTreeRegressor

calif_housing = fetch_california_housing()

for line in calif_housing.DESCR.split("\n")[5:21]:

    print(line)

X, Y = calif_housing.data, calif_housing.target

print("\nData Size : ", X.shape, Y.shape)

X_train, X_test, Y_train, Y_test = train_test_split(X, Y, train_size=0.90, test_size=0.1, random_state=123)

print("Train/Test Split : ", X_train.shape, X_test.shape, Y_train.shape, Y_test.shape)

dtree = DecisionTreeRegressor(max_depth=4, max_leaf_nodes=250, max_features="log2")

dtree.fit(X_train, Y_train)

print("Test R^2 Score : %.2f"%dtree.score(X_test, Y_test))

print("Train R^2 Score : %.2f"%dtree.score(X_train, Y_train))

3.2.2 Explain ML Model and Predictions using "Eli5" ("show_*()" Methods)¶

1. Explain ML Model using "show_weights()" (Global Features Importances)¶

Below we have plotted feature importances for the model.

show_weights(dtree, feature_names=calif_housing.feature_names,

show=["feature_importances"])

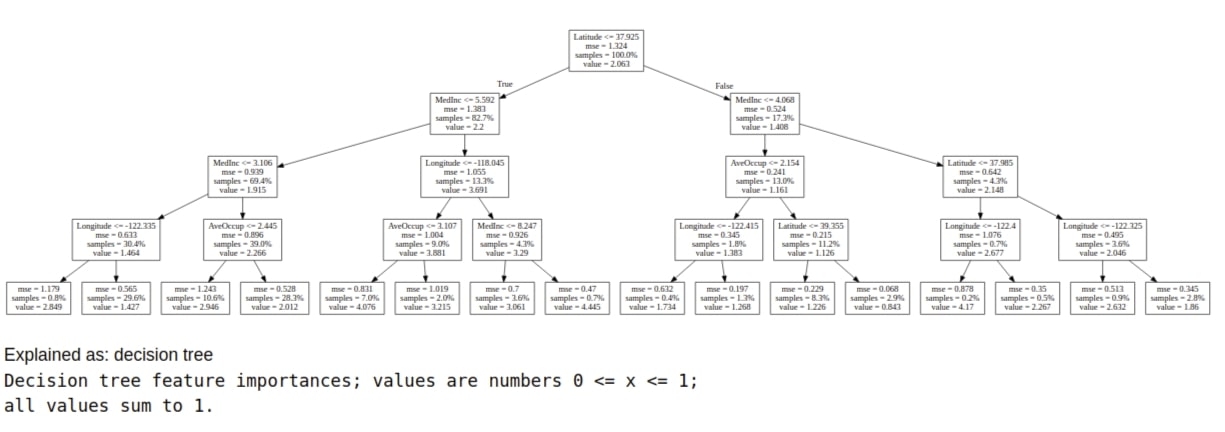

Below we have plotted a global decision tree, an algorithm used for prediction, and a description of an algorithm using the show_weights() method.

show_weights(dtree, feature_names=calif_housing.feature_names,

show=["decision_tree", "method", "description"])

2. Explain Individual Predictions using "show_prediction()" (Local Features Importances)¶

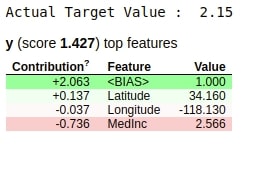

Below we have explained the model's prediction for a random test sample using the show_prediction() method.

rand = random.randint(0, len(X_test) - 1)

print("Actual Target Value : ", Y_test[rand])

show_prediction(dtree,

X_test[rand],

feature_names=calif_housing.feature_names,

show_feature_values=True,

)
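For tree models, a local explanation can be thought of as walking the decision path of the sample and crediting each change in node value to the feature used for the split, with the root value acting as the bias. The sketch below illustrates that idea on a tiny hand-built tree with made-up values; it reflects our understanding of the approach and is not eli5's implementation or a trained model.

```python
# Hand-built toy regression tree (hypothetical values, not a trained model).
# Every node stores the mean target of the training samples that reach it.
tree = {
    "value": 2.0, "feature": "MedInc", "threshold": 5.0,
    "left": {
        "value": 1.5, "feature": "AveRooms", "threshold": 4.0,
        "left": {"value": 1.0},
        "right": {"value": 1.75},
    },
    "right": {"value": 3.5},
}


def path_contributions(node, sample):
    """Walk the decision path and credit each value change to the split feature."""
    contributions = {"<BIAS>": node["value"]}  # root mean acts as the bias
    while "feature" in node:  # leaves have no "feature" key
        feature = node["feature"]
        go_left = sample[feature] <= node["threshold"]
        child = node["left"] if go_left else node["right"]
        change = child["value"] - node["value"]
        contributions[feature] = contributions.get(feature, 0.0) + change
        node = child
    return contributions, node["value"]


sample = {"MedInc": 3.0, "AveRooms": 6.0}
contributions, prediction = path_contributions(tree, sample)

print("Contributions :", contributions)
print("Prediction    :", prediction)
```

The bias and the per-feature contributions add up to the value of the leaf that the sample ends in, which is the tree's prediction.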

3.3. Decision Tree ¶

3.3.1 Load Data, Train Model and Evaluate ML Metrics¶

As a part of our third example, we'll be using the same California housing dataset from the previous example. We have trained a decision tree on it as earlier. In this example, we'll explain how to create explanation objects from machine learning models and then format the output of those explanations.

from sklearn.datasets import fetch_california_housing

from sklearn.tree import DecisionTreeRegressor

calif_housing = fetch_california_housing()

X, Y = calif_housing.data, calif_housing.target

print("Data Size : ", X.shape, Y.shape)

X_train, X_test, Y_train, Y_test = train_test_split(X, Y, train_size=0.90, test_size=0.1, random_state=123)

print("Train/Test Split : ", X_train.shape, X_test.shape, Y_train.shape, Y_test.shape)

dtree = DecisionTreeRegressor(max_depth=3, max_leaf_nodes=250, max_features="log2")

dtree.fit(X_train, Y_train)

print("Test R^2 Score : %.2f"%dtree.score(X_test, Y_test))

print("Train R^2 Score : %.2f"%dtree.score(X_train, Y_train))

3.3.2 Explain ML Model and Predictions using "Eli5" ("explain_*()" Methods)¶

1. Explain ML Model using "explain_weights_sklearn()" (Global Features Importances)¶

The explain_weights_sklearn() method available as a part of the sklearn module of eli5 takes a trained model and feature names as input and returns an explanation object of type Explanation. We can pass this explanation object to different formatting methods available with eli5 to display explanations in different formats.

Below we have generated an explanation object from our decision tree regressor model.

from eli5.sklearn import explain_weights_sklearn

explanation = explain_weights_sklearn(dtree, feature_names=calif_housing.feature_names)

type(explanation)

Format Output as Pandas DataFrame using "format_as_dataframe()"¶

The format_as_dataframe() method takes as input explanation object and returns model weights as pandas dataframe.

from eli5.formatters import format_as_dataframe, format_as_dataframes

format_as_dataframe(explanation)

Format Output as Text using "format_as_text()"¶

The format_as_text() method takes as input explanation object and formats explanation as text data.

from eli5.formatters import format_as_text

print(format_as_text(explanation))

Format Output as HTML "format_as_html()"¶

The format_as_html() method takes as input explanation object and returns explanation as HTML stored in a python string. We can then display this string HTML using IPython's display functionality.

from eli5.formatters import format_as_html

from IPython.display import HTML

html_rep = format_as_html(explanation)

HTML(data=html_rep)

2. Explain ML Model using "explain_decision_tree()" (Global Features Importances)¶

The explain_decision_tree() method available from the sklearn module of eli5 takes as input decision tree model and returns explanation object of type Explanation. We can then display this object and it'll show weights HTML table as well as decision tree as HTML.

from eli5.sklearn import explain_decision_tree

tree_explanation = explain_decision_tree(dtree, feature_names=calif_housing.feature_names)

print(type(tree_explanation))

tree_explanation

3. Explain Individual Predictions using "explain_prediction_tree_regressor()" (Local Features Importances)¶

The explain_prediction_tree_regressor() method available as a part of the sklearn.explain_prediction module of eli5 takes a decision tree and a data sample as input and returns an explanation object. We can then format this object with the various formatters available with eli5.

from eli5.sklearn import explain_prediction

rand = random.randint(0, len(X_test) - 1)

print("Actual Target Value : ", Y_test[rand])

explanation = explain_prediction.explain_prediction_tree_regressor(dtree, X_test[rand],

feature_names=calif_housing.feature_names,

top=len(calif_housing.feature_names)

)

Format Output as Pandas DataFrame¶

Below we are formatting the explanation generated in the previous step as a pandas dataframe.

format_as_dataframe(explanation)

4. Structured Data (Tabular) : Classification Tasks ¶

As a part of this section, we'll be explaining the usage of eli5 for the classification of structured data. We'll be loading various structured datasets, performing classification on them, and explaining predictions using eli5.

If you want to learn about logistic regression ML model available from scikit-learn then please feel free to check below link.

4.1. Binary Classification: Logistic Regression¶

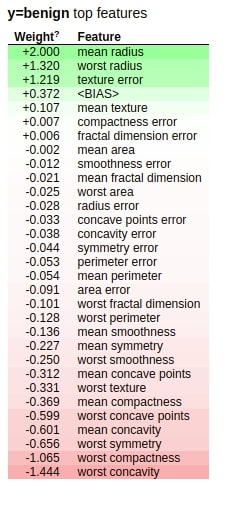

The first example that we'll use for explaining the usage of eli5 for classification tasks uses a breast cancer dataset available from scikit-learn.

4.1.1 Load Dataset, Train Model and Evaluate ML Metrics¶

We have loaded the dataset and printed a description that explains dataset features and the target variable. The target, in this case, is a binary variable specifying whether the tumor is malignant or benign. We have then divided the dataset into train/test sets, fitted logistic regression on it, and printed classification metrics like accuracy, confusion matrix, and classification report of test data.

from sklearn.datasets import load_breast_cancer

from sklearn.metrics import classification_report, confusion_matrix

from sklearn.linear_model import LogisticRegression

breast_cancer = load_breast_cancer()

for line in breast_cancer.DESCR.split("\n")[5:32]:

    print(line)

X, Y = breast_cancer.data, breast_cancer.target

print("\nData Size : ", X.shape, Y.shape)

X_train, X_test, Y_train, Y_test = train_test_split(X, Y, train_size=0.90, test_size=0.1, stratify=Y, random_state=123)

print("Train/Test Sizes : ", X_train.shape, X_test.shape, Y_train.shape, Y_test.shape)

lr = LogisticRegression()

lr.fit(X_train, Y_train)

print("Test Accuracy : %.2f"%lr.score(X_test, Y_test))

print("Train Accuracy : %.2f"%lr.score(X_train, Y_train))

print()

print("Confusion Matrix : ")

print(confusion_matrix(Y_test, lr.predict(X_test)))

print()

print("Classification Report")

print(classification_report(Y_test, lr.predict(X_test)))
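To read the confusion matrix and classification report printed above, it helps to see how the numbers relate. The sketch below builds a confusion matrix by hand (rows are true classes, columns are predicted classes, the layout scikit-learn uses) and derives precision and recall for one class from it. The labels are made up and the helper name confusion is hypothetical.

```python
def confusion(y_true, y_pred, n_classes):
    """Confusion matrix with true classes as rows and predictions as columns."""
    matrix = [[0] * n_classes for _ in range(n_classes)]
    for true_label, predicted_label in zip(y_true, y_pred):
        matrix[true_label][predicted_label] += 1
    return matrix


# Hypothetical labels and predictions for a binary task.
y_true = [0, 0, 0, 1, 1, 1, 1, 1]
y_pred = [0, 0, 1, 1, 1, 1, 1, 0]

matrix = confusion(y_true, y_pred, n_classes=2)

# Precision of class 1: correct class-1 predictions / all class-1 predictions
# (column 1 of the matrix).
precision_1 = matrix[1][1] / (matrix[0][1] + matrix[1][1])

# Recall of class 1: correct class-1 predictions / all true class-1 samples
# (row 1 of the matrix).
recall_1 = matrix[1][1] / (matrix[1][0] + matrix[1][1])

print("Confusion matrix  :", matrix)
print("Precision class 1 :", precision_1)
print("Recall class 1    :", recall_1)
```

Off-diagonal cells are the misclassified samples, which are the ones worth inspecting with a local explanation.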

4.1.2 Explain ML Model and Predictions using "Eli5" ("show_*()" Methods)¶

1. Explain ML Model using "show_weights()" (Global Features Importances)¶

Below we have plotted weights of the model using the show_weights() method. We have passed target names as well this time to method.

show_weights(lr,

targets=[0, 1], target_names=breast_cancer.target_names,

feature_names=breast_cancer.feature_names,

top=len(breast_cancer.feature_names)+1)

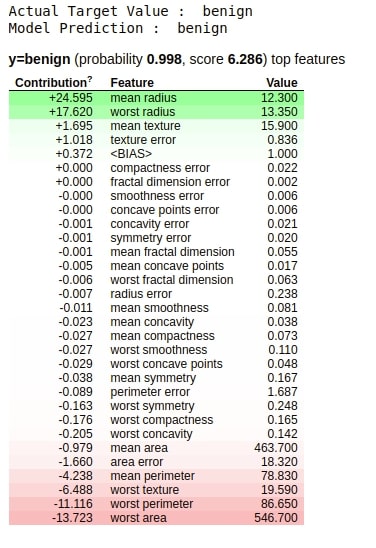

2. Explain Individual Predictions using "show_prediction()" (Local Features Importances)¶

Explaining Correct Prediction¶

Below we are using the show_prediction() method to show the contribution of features in the prediction of the random sample chosen from the test dataset.

rand = random.randint(0, len(X_test) - 1)

print("Actual Target Value : ", breast_cancer.target_names[Y_test[rand]])

print("Model Prediction : ", breast_cancer.target_names[lr.predict(X_test[rand].reshape(1,-1))[0]])

show_prediction(lr, X_test[rand],

targets=[0, 1], target_names=breast_cancer.target_names,

feature_names=breast_cancer.feature_names,

show_feature_values=True)

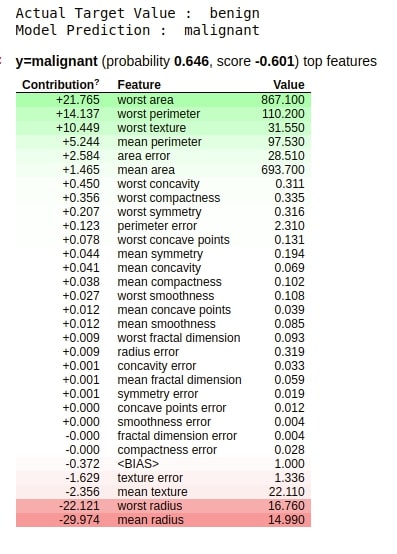

Explaining Incorrect Prediction¶

Below we have displayed another example for show_prediction() but this time we have chosen a sample randomly from the test dataset which was predicted wrong by the model.

preds = lr.predict(X_test)

false_preds = np.argwhere((preds != Y_test)).flatten()

rand = random.choice(false_preds)

print("Actual Target Value : ", breast_cancer.target_names[Y_test[rand]])

print("Model Prediction : ", breast_cancer.target_names[lr.predict(X_test[rand].reshape(1,-1))[0]])

show_prediction(lr, X_test[rand],

targets=[0, 1], target_names=breast_cancer.target_names,

feature_names=breast_cancer.feature_names,

show_feature_values=True)
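Because the test set is small, a well-fitted model may misclassify nothing at all. The helper below is a minimal sketch of selecting a misclassified index that handles this case; `pick_misclassified` and its `seed` parameter are hypothetical names introduced here for illustration.

```python
import random

import numpy as np


def pick_misclassified(y_true, y_pred, seed=None):
    """Return the index of one randomly chosen wrong prediction, or None if there are none."""
    y_true = np.asarray(y_true)
    y_pred = np.asarray(y_pred)
    # Positions where the prediction differs from the actual label.
    wrong = np.flatnonzero(y_true != y_pred)
    if wrong.size == 0:
        return None
    rng = random.Random(seed)
    return int(rng.choice(wrong.tolist()))


# Only position 2 differs between the two label lists.
print(pick_misclassified([0, 1, 1, 0], [0, 1, 0, 0]))  # 2
# No differences at all, so there is nothing to explain.
print(pick_misclassified([1, 0], [1, 0]))  # None
```

In the tutorial's setting this would be called as `pick_misclassified(Y_test, lr.predict(X_test))`, and the explanation step skipped when it returns None.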

4.2. MultiClass Classification: Logistic Regression ¶

NOTE: Please feel free to skip this section if you have properly understood how to use "Eli5" for classification tasks using our previous binary classification example. You can skim through this section as well.

The second example that we'll use for explaining the usage of eli5 for the classification task uses the wine dataset, which holds the results of a chemical analysis of wines from three different cultivars. It's a multi-class classification problem.

4.2.1 Load Dataset, Train Model and Evaluate ML Metrics¶

Below, we have loaded wine dataset from sklearn and divided the dataset into train/test sets, fitted model on train data, and printed various classification metrics like accuracy, confusion matrix, and classification report calculated on test data.

from sklearn.datasets import load_wine

wine = load_wine()

X, Y = wine.data, wine.target

print("Data : ", X.shape, Y.shape)

X_train, X_test, Y_train, Y_test = train_test_split(X, Y, train_size=0.90, test_size=0.1, stratify=Y, random_state=123)

print("Train/Test Split : ", X_train.shape, X_test.shape, Y_train.shape, Y_test.shape)

lr = LogisticRegression()

lr.fit(X_train, Y_train)

print("Test Accuracy : %.2f"%lr.score(X_test, Y_test))

print("Train Accuracy : %.2f"%lr.score(X_train, Y_train))

print("Confusion Matrix : ")

print(confusion_matrix(Y_test, lr.predict(X_test)))

print()

print("Classification Report")

print(classification_report(Y_test, lr.predict(X_test)))

4.2.2 Explain ML Model and Predictions using "Eli5" ("show_*()" Methods)¶

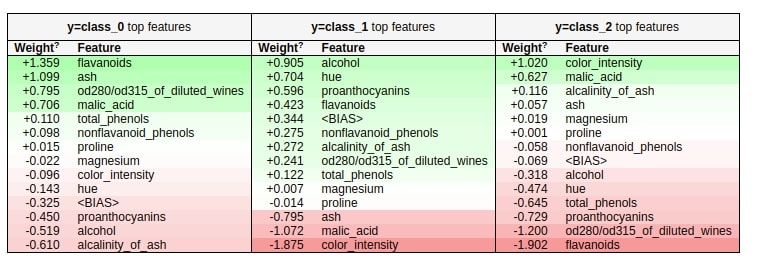

1. Explain ML Model using "show_weights()" (Global Features Importances)¶

Below we are using the show_weights() method to plot a chart explaining weights for multi-class classification problems. We can see three different tables, one for each class of wine type.

show_weights(lr,

targets=[0, 1, 2], target_names=wine.target_names,

feature_names=wine.feature_names)

2. Explain Individual Predictions using "show_prediction()" (Local Features Importances)¶

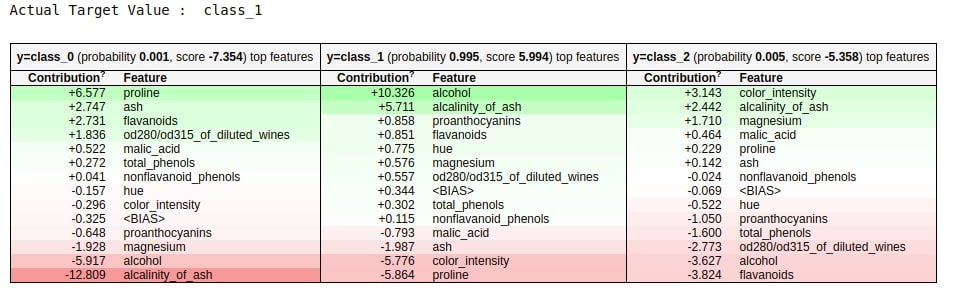

Below we have used the show_prediction() method for explaining how different features contribute to the prediction of a random sample from the test dataset.

We can notice that there are three tables in this case as well, one per class. Summing the feature contributions and the BIAS in each table gives that class's score, and the class with the highest score is the one predicted by the model.

rand = random.randint(0, len(X_test)-1)  # randint() includes both endpoints

print("Actual Target Value : ", wine.target_names[Y_test[rand]])

show_prediction(lr, X_test[rand],

targets=[0, 1, 2], target_names=wine.target_names,

feature_names=wine.feature_names)
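The "sum each table and pick the highest" rule can be checked directly against scikit-learn. The sketch below refits a model on the full wine dataset purely for illustration (the names `model`, `sample`, `contributions` and `scores` are ours, and `max_iter` is raised only to avoid convergence warnings), then compares the hand-computed scores with `decision_function()`.

```python
import numpy as np
from sklearn.datasets import load_wine
from sklearn.linear_model import LogisticRegression

wine = load_wine()

# Assumption: fitted on all rows for brevity; the tutorial fits on the train split.
model = LogisticRegression(max_iter=10000).fit(wine.data, wine.target)

sample = wine.data[0]

# One row per class: weight * feature value for every feature.
contributions = model.coef_ * sample

# Adding the per-class intercept (BIAS) gives one score per class.
scores = contributions.sum(axis=1) + model.intercept_

predicted = int(np.argmax(scores))
print("Per-class scores :", np.round(scores, 3))
print("Highest score    :", wine.target_names[predicted])
print("model.predict()  :", wine.target_names[model.predict(sample.reshape(1, -1))[0]])
```

The two printed class names should agree, since a linear classifier predicts the class with the largest score.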

Change Layout of Table¶

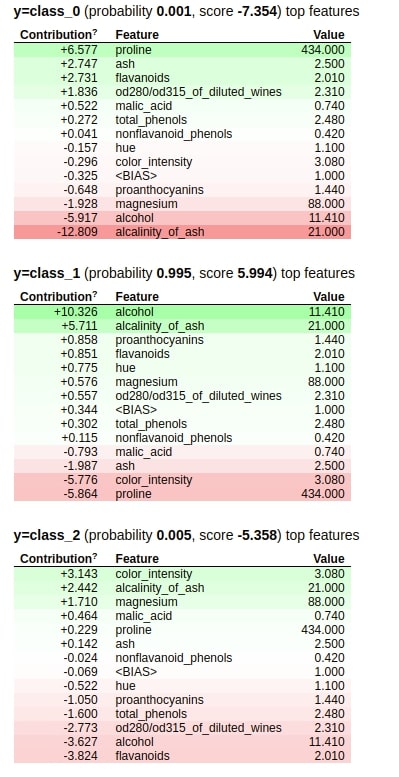

Below we have shown another example of show_prediction(). This time we have stacked the tables vertically, because with the actual feature values included the side-by-side tables were overlapping.

show_prediction(lr, X_test[rand],

targets=[0, 1, 2], target_names=wine.target_names,

feature_names=wine.feature_names,

horizontal_layout=False,

show_feature_values=True)

4.3. Decision Tree ¶

Our third example of explaining a classification task on structured data uses the IRIS flowers dataset available from scikit-learn. It has measurements of three different types of IRIS flowers.

4.3.1 Load Dataset, Train Model and Evaluate ML Metrics¶

We'll be loading dataset, dividing it into train/test sets, fitting a decision tree to train data, and printing various classification metrics evaluated on the test dataset.

from sklearn.datasets import load_iris

from sklearn.tree import DecisionTreeClassifier

from sklearn.model_selection import train_test_split

from sklearn.metrics import confusion_matrix, classification_report

iris = load_iris()

for line in iris.DESCR.split("\n")[5:19]:

    print(line)

X, Y = iris.data, iris.target

print("\nData Size : ", X.shape, Y.shape)

X_train, X_test, Y_train, Y_test = train_test_split(X, Y, train_size=0.90, test_size=0.1, stratify=Y, random_state=123)

print("Train/Test Split : ", X_train.shape, X_test.shape, Y_train.shape, Y_test.shape)

dtree = DecisionTreeClassifier(max_depth=None, max_features="log2")

dtree.fit(X_train, Y_train)

print("Test Accuracy : %.2f"%dtree.score(X_test, Y_test))

print("Train Accuracy : %.2f"%dtree.score(X_train, Y_train))

print()

print("Confusion Matrix : ")

print(confusion_matrix(Y_test, dtree.predict(X_test)))

print()

print("Classification Report")

print(classification_report(Y_test, dtree.predict(X_test)))

4.3.2 Explain Predictions using "Eli5"¶

1. Explain ML Model using "show_weights()" (Global Features Importances)¶

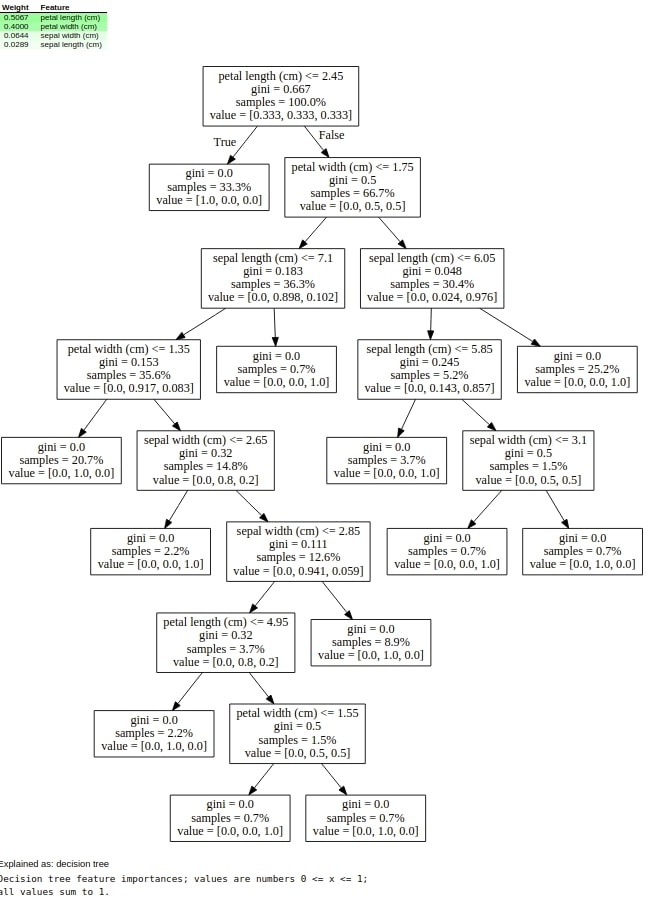

Below we are using the show_weights() method to display the feature importances, the decision tree itself, the method used by the ML algorithm for prediction, and a description of the algorithm.

show_weights(dtree, feature_names=iris.feature_names,

show=["feature_importances", "decision_tree", "method", "description"])

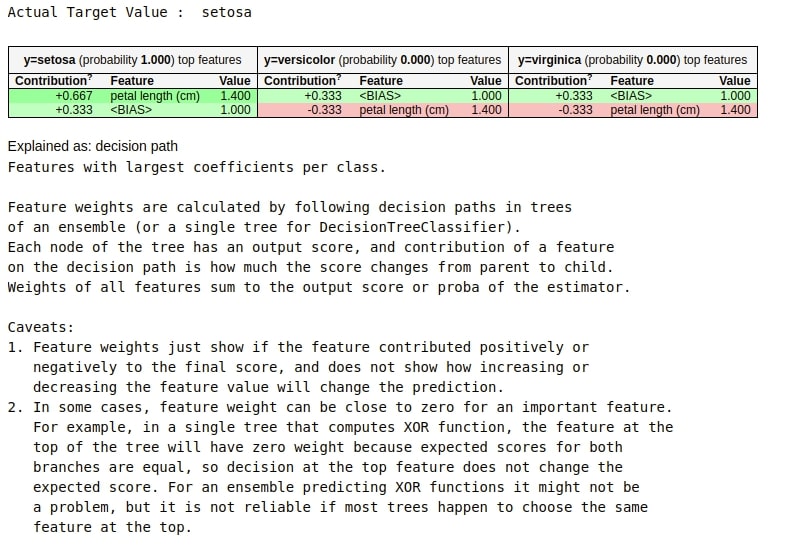

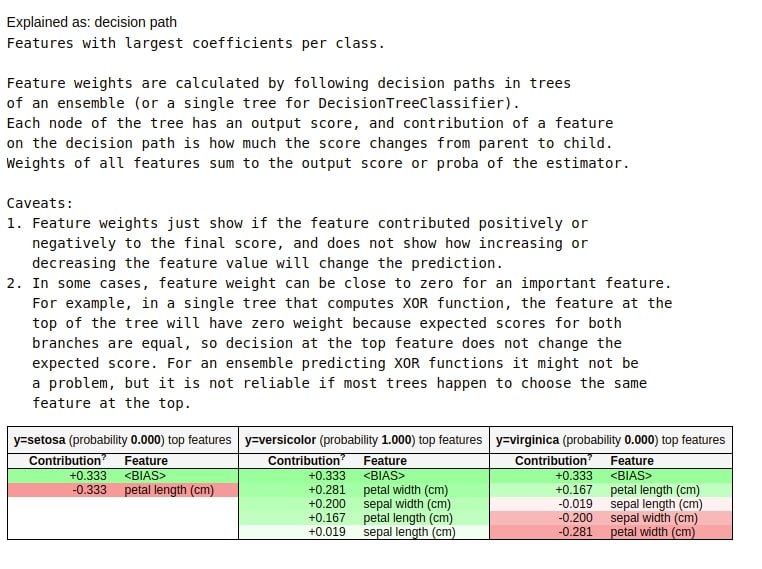
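The feature importances and the tree structure shown above are also available from scikit-learn itself, which is handy for cross-checking the eli5 output. The sketch below is an illustration under our own assumptions: `tree_model` is a hypothetical name, and `random_state=0` is set only to make the run repeatable.

```python
from sklearn.datasets import load_iris
from sklearn.tree import DecisionTreeClassifier, export_text

iris = load_iris()

# Assumption: default settings with a fixed seed, fitted on all rows for brevity.
tree_model = DecisionTreeClassifier(random_state=0).fit(iris.data, iris.target)

# Impurity-based importances, one per feature, normalised to sum to 1.
importances = tree_model.feature_importances_
for name, importance in sorted(zip(iris.feature_names, importances),
                               key=lambda pair: pair[1], reverse=True):
    print("%-20s %.4f" % (name, importance))

# Plain-text rendering of the fitted tree's split rules.
print(export_text(tree_model, feature_names=list(iris.feature_names)))
```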

2. Explain Individual Predictions using "show_prediction()" (Local Features Importances)¶

Below we are using a random sample from the test dataset and plotting the contribution of individual features in predicting its class.

rand = random.randint(0, len(X_test)-1)  # randint() includes both endpoints

print("Actual Target Value : ", iris.target_names[Y_test[rand]])

show_prediction(dtree,

X_test[rand],

feature_names=iris.feature_names,

targets=[0,1,2], target_names=iris.target_names,

show_feature_values=True,

show=["targets", "method", "description"]

)
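For tree models, eli5's documentation describes prediction explanations as following the sample's decision path and crediting each split's feature with the change in expected score between parent and child node. The sketch below is our own minimal re-implementation of that idea with plain scikit-learn, not eli5's code; all variable names are ours and the sample index is arbitrary.

```python
import numpy as np
from sklearn.datasets import load_iris
from sklearn.tree import DecisionTreeClassifier

iris = load_iris()
clf = DecisionTreeClassifier(random_state=0).fit(iris.data, iris.target)
sample = iris.data[100].reshape(1, -1)

tree = clf.tree_

# Class distribution at every node, normalised so it works whether
# scikit-learn stores raw counts or fractions in tree_.value.
node_values = tree.value[:, 0, :]
node_probs = node_values / node_values.sum(axis=1, keepdims=True)

# Node ids visited by the sample, from the root down to its leaf.
path = clf.decision_path(sample).indices

# The root distribution plays the role of BIAS.
bias = node_probs[path[0]]

# Credit each split's feature with the change in class probabilities.
contributions = np.zeros((iris.data.shape[1], len(iris.target_names)))
for parent, child in zip(path[:-1], path[1:]):
    contributions[tree.feature[parent]] += node_probs[child] - node_probs[parent]

reconstructed = bias + contributions.sum(axis=0)
print("BIAS + contributions :", np.round(reconstructed, 3))
print("predict_proba()      :", np.round(clf.predict_proba(sample)[0], 3))
```

The two printed vectors match because the per-step changes telescope from the root distribution to the leaf distribution.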

3. Explain ML Model using "explain_weights_sklearn()" (Global Features Importances)¶

We'll now generate a few explanation objects and format explanations generated by eli5 in a different way.

Below we are generating the eli5 Explanation object by using the explain_weights_sklearn() method passing it decision tree, feature names, and target names.

from eli5.sklearn import explain_weights_sklearn

explanation = explain_weights_sklearn(dtree, feature_names=iris.feature_names, target_names=iris.target_names)

Format Output as DataFrame¶

Below we are formatting the explanation object generated in the previous cell as a pandas dataframe.

from eli5.formatters import format_as_dataframe, format_as_dataframes

format_as_dataframe(explanation)

Format Explanation as Text¶

Below we are formatting the explanation generated earlier as text using the format_as_text() method. Because this explanation came from explain_weights_sklearn(), the text lists the feature importances followed by a textual rendering of the decision tree.

from eli5.formatters import format_as_text

print(format_as_text(explanation))

Format Output as HTML¶

Below we have plotted the explanation as HTML.

from eli5.formatters import format_as_html

from IPython.display import HTML

html_rep = format_as_html(explanation)

HTML(data=html_rep)

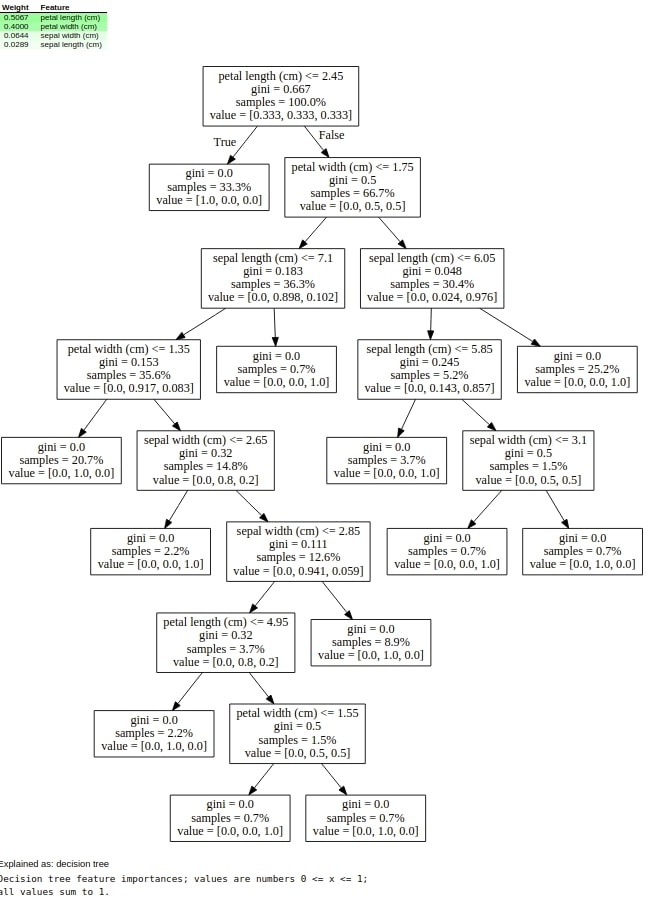

4. Explain ML Model using "explain_decision_tree()" (Global Features Importances)¶

Below we are plotting feature importance and decision tree as HTML using the explain_decision_tree() method of eli5.

from eli5.sklearn import explain_weights_sklearn, explain_decision_tree

explain_decision_tree(dtree, feature_names=iris.feature_names)

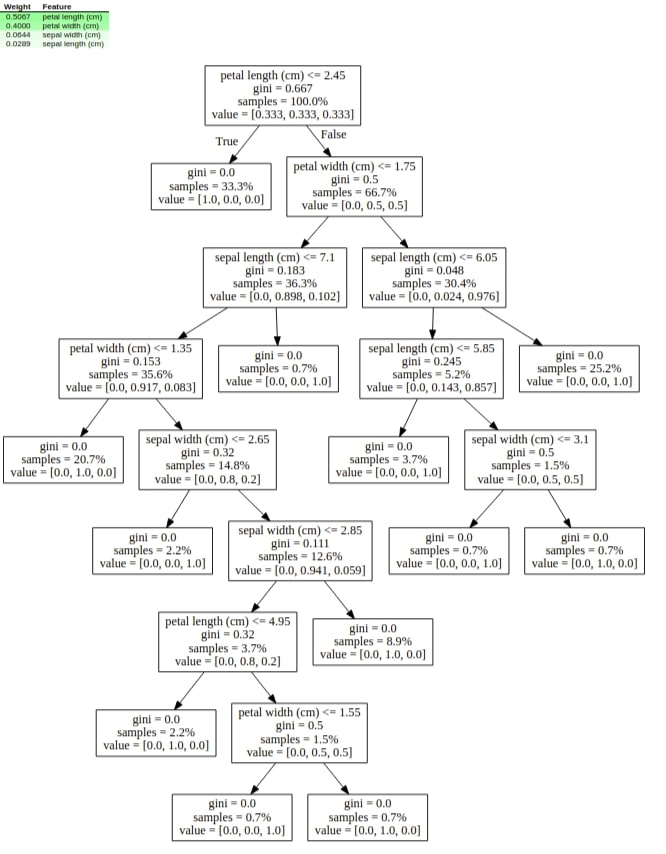

5. Explain Individual Predictions using "explain_prediction()" (Local Features Importances)¶

Below we are generating an explanation of a random sample from the test data using the explain_prediction_tree_classifier() function of eli5's sklearn.explain_prediction module.

from eli5.sklearn import explain_prediction

rand = random.randint(0, len(X_test)-1)  # randint() includes both endpoints

print("Actual Target Value : ", iris.target_names[Y_test[rand]])

explanation = explain_prediction.explain_prediction_tree_classifier(dtree, X_test[rand],

targets=[0,1,2], target_names=iris.target_names,

feature_names=iris.feature_names

)

Format Explanation as HTML¶

Below we are plotting explanation generated for a random sample from the previous cell as HTML.

from eli5.formatters import format_as_html

from IPython.display import HTML

HTML(format_as_html(explanation))

5. Unstructured Data (Text) : Classification ¶

As a part of this section, we'll explain how to use eli5 to interpret a model trained on unstructured text data. If you want to learn in-depth about the scikit-learn estimators used in this section, please feel free to check our tutorial on the same.

5.1. Random Forest with Word-Level Tokenization¶

The dataset that we'll use for explaining the usage of eli5 with unstructured data (text) is the spam/ham messages dataset available from UCI.


5.1.1 Download Dataset¶

Below we are downloading it from the UCI repository and then unzipping it.

!wget https://archive.ics.uci.edu/ml/machine-learning-databases/00228/smsspamcollection.zip

!unzip smsspamcollection.zip

Below we are loading the spam/ham dataset by splitting each line of the file.

with open('SMSSpamCollection') as f:

    data = [line.strip().split('\t') for line in f.readlines()]

y, text = zip(*data)

We can count the number of spam and ham samples by passing the target values to Counter from the collections library.

import collections

collections.Counter(y)
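The loading and counting steps above can be tried without downloading anything. The sketch below runs the same logic on a few made-up lines in the tab-separated "label, then message" layout; the sample lines and the names `sample_file`, `labels` and `messages` are ours, not part of the dataset code.

```python
import collections
import io

# Hypothetical stand-in for the downloaded file: "<label>\t<message>" per line.
sample_file = io.StringIO(
    "ham\tOk, see you at lunch then\n"
    "spam\tFree entry! Text WIN to claim your prize now\n"
    "ham\tRunning late, start without me\n"
)

labels, messages = [], []
for line in sample_file:
    # Split on the first tab only, so a tab inside a message cannot break the row.
    label, message = line.rstrip("\n").split("\t", 1)
    labels.append(label)
    messages.append(message)

# Counter tallies how often each label occurs.
counts = collections.Counter(labels)
print(counts)  # Counter({'ham': 2, 'spam': 1})
```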

5.1.2 Vectorize Data, Train Model and Evaluate ML Metrics¶

Scikit-learn models expect numeric input, typically a matrix of float values, but the dataset that we have is a list of strings. We'll transform this text dataset into a matrix of floats using the TfidfVectorizer class of scikit-learn, which calculates tf-idf values for each word of the text and creates a matrix based on the words present in each sample.

We'll start by dividing the dataset into train/test sets, transform both sets using TfidfVectorizer, fit a random forest classifier on the train data, and print various classification metrics like accuracy, confusion matrix, and classification report evaluated on the test dataset.

If you are interested in learning about the inner workings of TfidfVectorizer then please feel free to visit our tutorial on feature extraction from text data using scikit-learn where we explain it in-depth.
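To make the text-to-matrix step concrete before applying it to the real messages, here is a small sketch on a toy corpus. The three documents and the names `docs`, `matrix` and `vocab` are invented for illustration; only TfidfVectorizer itself comes from the tutorial.

```python
from sklearn.feature_extraction.text import TfidfVectorizer

# Hypothetical toy corpus standing in for the SMS messages.
docs = ["free prize call now", "call me later", "win a free prize"]

vectorizer = TfidfVectorizer(analyzer="word")
matrix = vectorizer.fit_transform(docs)

# vocabulary_ maps each learned word to its column index in the matrix.
vocab = sorted(vectorizer.vocabulary_, key=vectorizer.vocabulary_.get)
print(vocab)

# One row per document, one column per distinct word.
# The default tokenizer drops one-letter tokens such as "a".
print(matrix.shape)  # (3, 7)

# Each row holds the tf-idf weights of that document's words.
print(matrix.toarray().round(2))
```

Words shared by several documents ("call", "free", "prize") receive a lower weight than words unique to one document, which is the point of the idf term.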

from sklearn.model_selection import train_test_split

from sklearn.feature_extraction.text import TfidfVectorizer

from sklearn.ensemble import RandomForestClassifier

from sklearn.metrics import confusion_matrix, classification_report

text_train, text_test, y_train, y_test = train_test_split(text, y,

random_state=42,

test_size=0.25,

stratify=y)

tfidf_vectorizer = TfidfVectorizer(analyzer="word", stop_words='english')

tfidf_vectorizer.fit(text_train)

X_train_tfidf = tfidf_vectorizer.transform(text_train)

X_test_tfidf = tfidf_vectorizer.transform(text_test)

print("Train/Test Vector Size : ", X_train_tfidf.shape, X_test_tfidf.shape)

rf = RandomForestClassifier()

rf.fit(X_train_tfidf, y_train)

print("Test Accuracy : %.2f"%rf.score(X_test_tfidf, y_test))

print("Train Accuracy : %.2f"%rf.score(X_train_tfidf, y_train))

print()

print("Confusion Matrix : ")

print(confusion_matrix(y_test, rf.predict(X_test_tfidf)))

print()

print("Classification Report")

print(classification_report(y_test, rf.predict(X_test_tfidf)))

5.1.3 Explain Predictions using "Eli5"¶

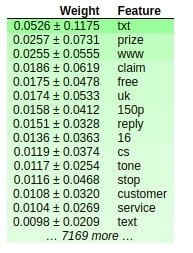

1. Explain ML Model using "show_weights()" (Global Features Importances)¶

Below we are plotting weights using the show_weights() method, but unlike the previous examples on structured data, this time we are plotting the weights of words from the text corpus. In order to show the mapping between words and weights, we need to pass the TF-IDF vectorizer created in the previous cell to the vec parameter.

We can also pass the list returned by the get_feature_names() method of the tfidf vectorizer (renamed get_feature_names_out() in newer scikit-learn releases) to the feature_names parameter of show_weights() and it'll work as well.

eli5.show_weights(rf, vec=tfidf_vectorizer, targets=[0,1], target_names=["ham", "spam"], top=15)

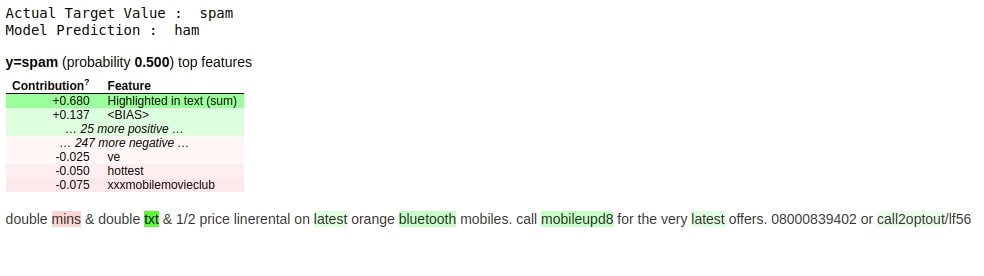

2. Explain Individual Predictions using "show_prediction()" (Local Features Importances)¶

Below we are plotting the contribution of individual words from a randomly chosen misclassified test sample in predicting the class of that sample. The original text message is shown as well, with the words that contributed most to the prediction highlighted.

preds = rf.predict(X_test_tfidf)

false_preds = np.argwhere((preds != y_test)).flatten()

rand = random.choice(false_preds)

print("Actual Target Value : ", y_test[rand])

print("Model Prediction : ", rf.predict(X_test_tfidf[rand])[0])

eli5.show_prediction(rf, text_test[rand], vec=tfidf_vectorizer, target_names=["ham", "spam"], top=10)

5.2. Random Forest with Character-Level Tokenization ¶

The second example that we'll use for explaining unstructured text data uses the same spam/ham messages. This time, however, the TF-IDF vectorizer works on character n-grams of length 3 to 5 instead of whole words. All other steps are the same as in the previous example; only the way TF-IDF transforms the text into a float matrix changes.
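To see what "character n-grams of length 3 to 5" means, the sketch below enumerates them for a short string in plain Python. It is a simplification written for illustration: `char_ngrams` is a hypothetical helper, and it skips the lowercasing and whitespace normalisation that the vectorizer applies.

```python
def char_ngrams(text, min_n=3, max_n=5):
    """Return every character n-gram of length min_n..max_n, by length and then position."""
    grams = []
    for n in range(min_n, max_n + 1):
        # Slide a window of width n across the text.
        for start in range(len(text) - n + 1):
            grams.append(text[start:start + n])
    return grams


print(char_ngrams("free!"))
# ['fre', 'ree', 'ee!', 'free', 'ree!', 'free!']
```

Each of these fragments becomes its own column in the TF-IDF matrix, which is why the feature count grows sharply compared with word-level tokenization.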

5.2.1 Vectorize Data, Train Model and Evaluate ML Metrics¶

tfidf_vectorizer = TfidfVectorizer(analyzer="char", ngram_range=(3,5))  # stop_words only applies when analyzer="word", so it is omitted here

tfidf_vectorizer.fit(text_train)

X_train_tfidf = tfidf_vectorizer.transform(text_train)

X_test_tfidf = tfidf_vectorizer.transform(text_test)

print("Train/Test Vector Size : ", X_train_tfidf.shape, X_test_tfidf.shape)

rf = RandomForestClassifier()

rf.fit(X_train_tfidf, y_train)

print("Test Accuracy : %.2f"%rf.score(X_test_tfidf, y_test))

print("Train Accuracy : %.2f"%rf.score(X_train_tfidf, y_train))

print()

print("Confusion Matrix : ")

print(confusion_matrix(y_test, rf.predict(X_test_tfidf)))

print()

print("Classification Report")

print(classification_report(y_test, rf.predict(X_test_tfidf)))

5.2.2 Explain Predictions using "Eli5"¶

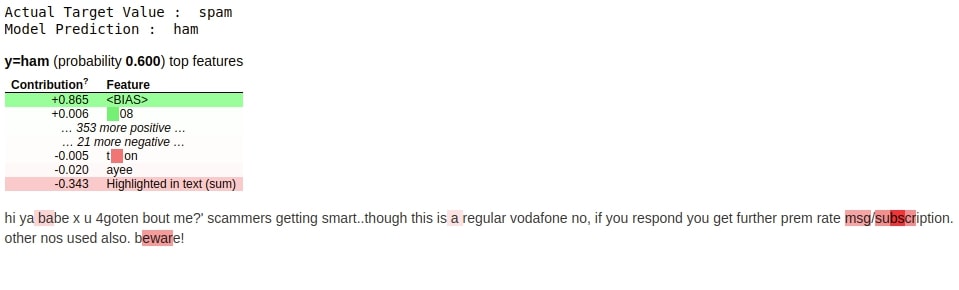

1. Explain Individual Predictions using "show_prediction()" (Local Features Importances)¶

Below we are plotting a random sample from test data that was predicted wrong by the model and highlighting which n-grams contributed most to that prediction.

preds = rf.predict(X_test_tfidf)

false_preds = np.argwhere((preds != y_test)).flatten()

rand = random.choice(false_preds)

print("Actual Target Value : ", y_test[rand])

print("Model Prediction : ", rf.predict(X_test_tfidf[rand])[0])

eli5.show_prediction(rf, text_test[rand], vec=tfidf_vectorizer, target_names=["ham", "spam"], top=10)

This ends our small tutorial explaining how to use the Python library "Eli5" to explain ML models and their predictions. We primarily concentrated on scikit-learn in this tutorial, but the same approach can be used with the other libraries that eli5 supports. The tutorial covered the usage of the library with structured and unstructured data as well as for classification and regression tasks.


Sunny Solanki
