Artificial Intelligence Tutorials

Artificial intelligence (AI) is the emulation of human intelligence in devices that have been designed to behave and think like humans. The phrase can also be used to refer to any computer that demonstrates characteristics of the human intellect, like learning and problem-solving. Through CoderzColumn Ai tutorials, you will learn to code for these concepts:

- Python Deep Learning Libraries

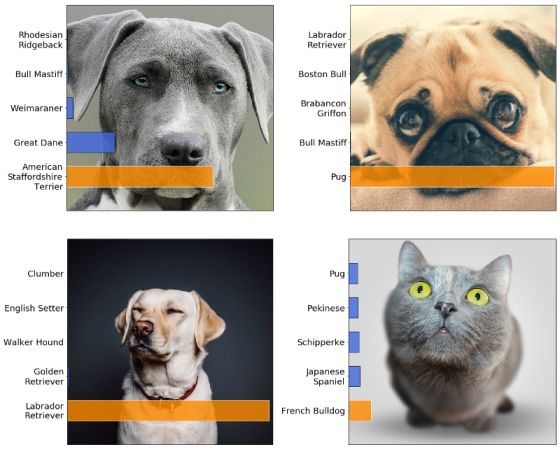

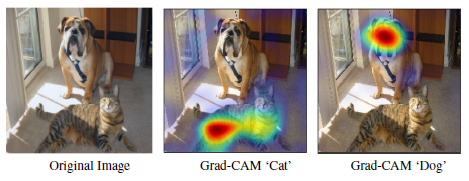

- Image Classification

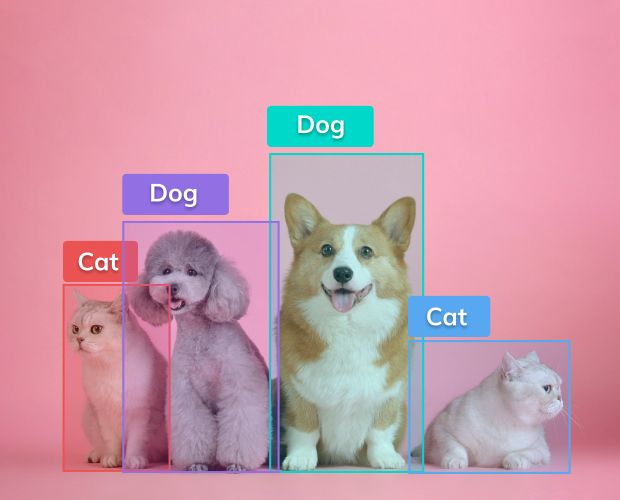

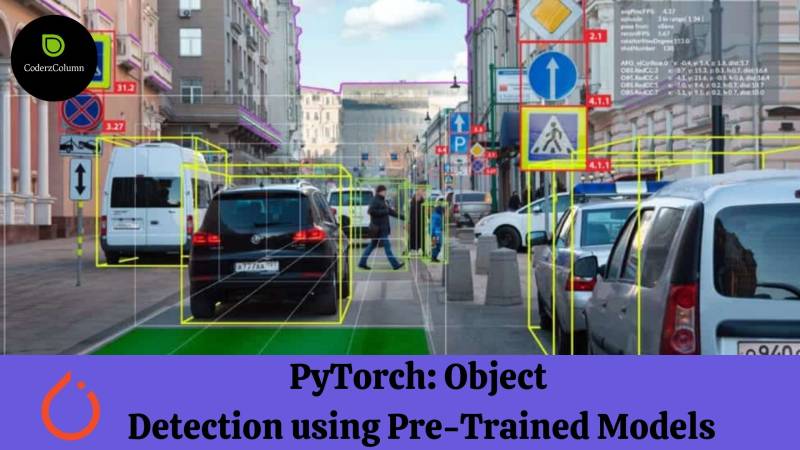

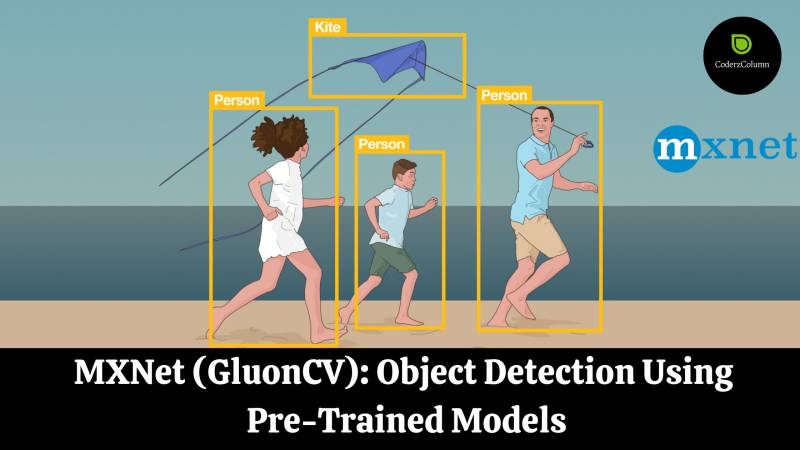

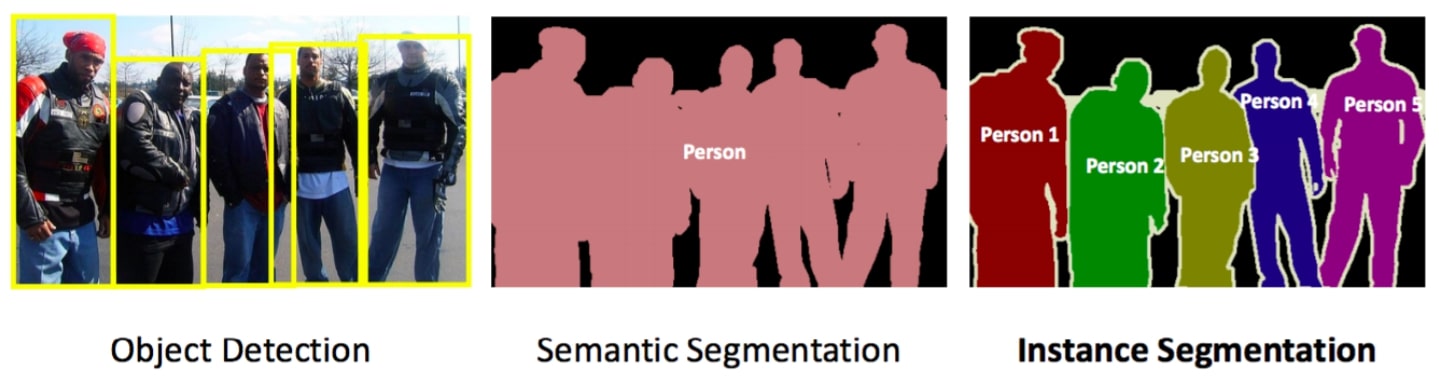

- Object Detection

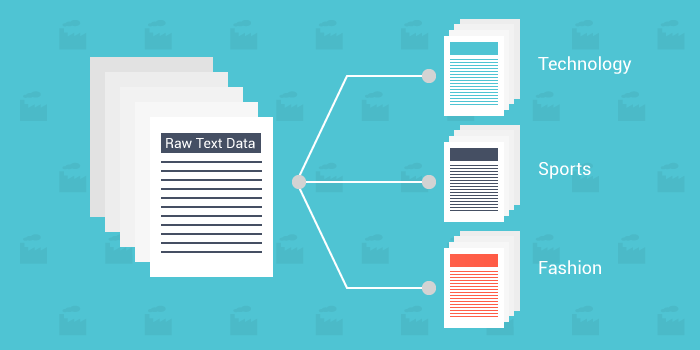

- Text Classification

- Text Generation

- Convolutional Neural Networks (CNNs)

- Recurrent Neural Networks (RNNs)

- Interpret Predictions Of Deep Neural Network Models

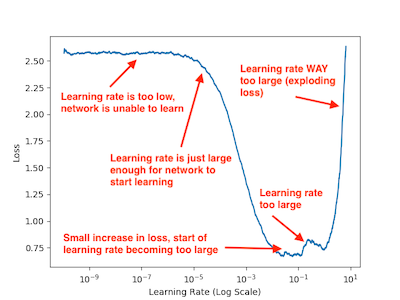

- Learning Rate Schedulers

- Hyperparameters Tuning

- Transfer Learning

- Word Embeddings (GloVe Embeddings, FastText, etc)

- RNNs (LSTMs) for Time Series

- Text Classification using RNNs, LSTM & CNNs

For an in-depth understanding of the above concepts, check out the sections below.

Sunny Solanki

Sunny Solanki