Guide to Use GloVe Embeddings with MXNet Networks (GluonNLP)¶

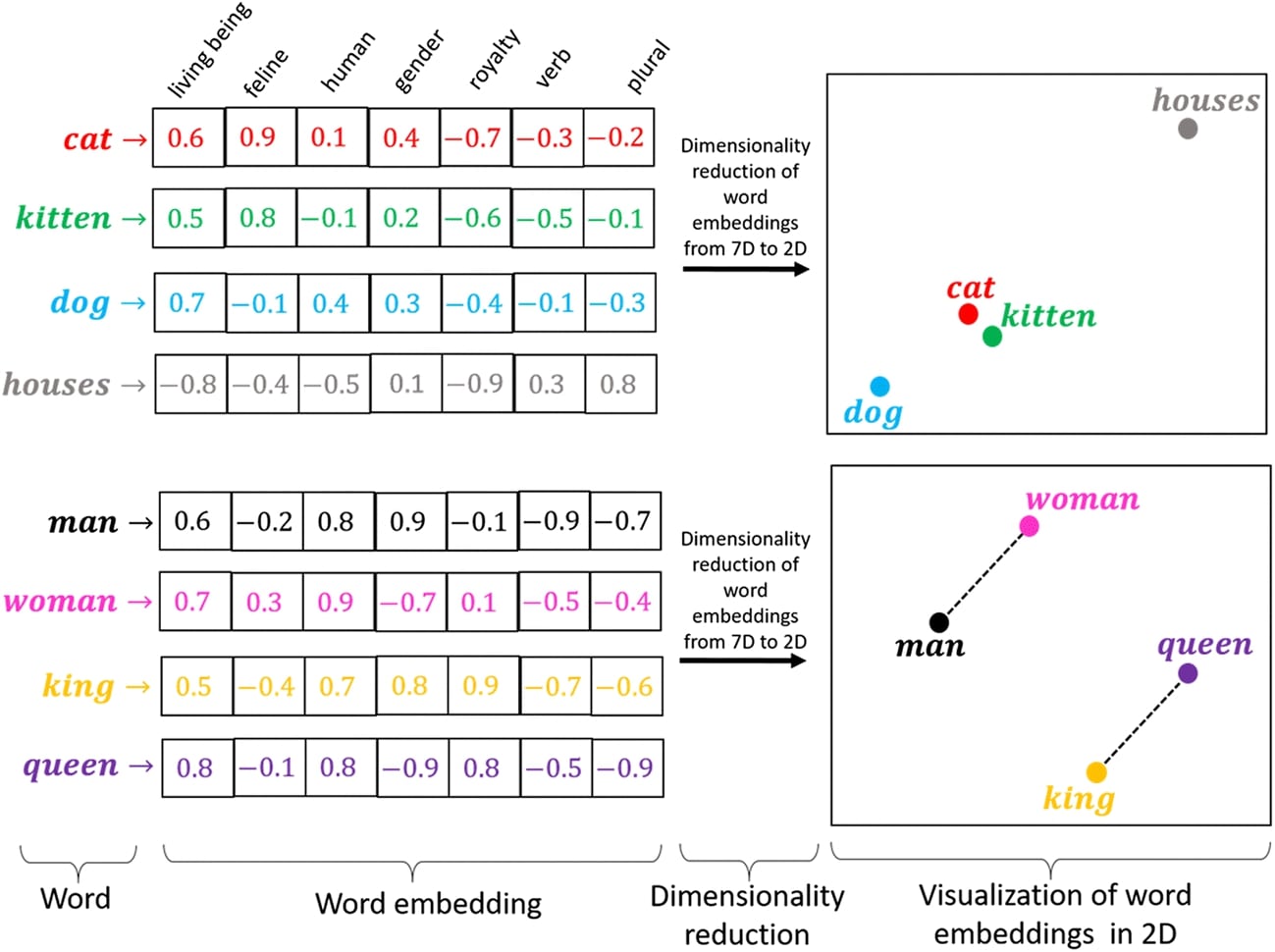

When working with text data for machine learning tasks, we need to encode text data. The encoding is the process where we map text data to real-valued data because ML algorithms can only work on them. There are many different ways to encode text data like word frequency, one-hot encoding, Tf-Idf, word embeddings, etc. All these approaches break the text down into a list of tokens (words) and then assign real values to these tokens. The majority of approaches assign just a single value to each token except word embeddings approach. The word embeddings approach assigns a real-valued vector to each token. This kind of encoding/representation gives more flexibility to represent each token (word). It can now capture more than one meaning of words possible in a different context.

GloVe (Global Vectors) is an unsupervised learning algorithm that can help us generate word embeddings from the corpus of data. The Stanford University professors have already developed GloVe embeddings of different lengths by training an unsupervised algorithm on different big datasets (Wikipedia, Twitter, etc) which we can use for our NLP tasks (text classification). Please check the below link if you want to learn more about GloVe.

As a part of this tutorial, we have explained how we can use GloVe embeddings (kind of word embeddings) with a neural network designed with Python deep learning library MXNet for text classification tasks. The GloVe embeddings is available from GluonNLP Python library which is a helper library of MXNet for NLP tasks. We have explained different approaches to using embeddings. After training networks, we evaluated their performances for comparison purposes. We have also tried to explain predictions made by networks using LIME algorithm which is commonly used for black-box models.

Below, we have listed important sections of Tutorial to give an overview of the material covered.

Important Sections Of Tutorial¶

- Prepare Data

- 1.1 Load Dataset

- 1.2 Define Tokenizer

- 1.3 Populate Vocabulary

- 1.4 Define Vectorization Function

- 1.5 Create Data Loaders

- Approach 1: GloVe '840B' Flattened (Max Tokens=50, Embeddings Length=300)

- Load GloVe 840B Embeddings

- Create Embeddings Matrix of Vocab Tokens using Glove Embeddings

- Define Network

- Train Network

- Evaluate Network Performance

- Explain Predictions using LIME Algorithm

- Approach 2: GloVe '840B' Averaged (Max Tokens=50, Embeddings Length=300)

- Approach 3: GloVe '840B' Summed (Max Tokens=50, Embeddings Length=300)

- Approach 4: GloVe '42B' Flattened (Max Tokens=50, Embeddings Length=300)

- Results Summary and Further Recommendations

Below, we have imported the necessary Python libraries and printed the versions we used in our tutorial.

import mxnet

print("MXNet Version : {}".format(mxnet.__version__))

import gluonnlp

print("GluonNLP Version : {}".format(gluonnlp.__version__))

import torchtext

print("TorchText Version : ".format(torchtext.__version__))

import gc

1. Prepare Data ¶

In this section, we are preparing data to be given to the neural network. We'll be using GloVe word embeddings approach to encoding our text data. In order to use GloVe with our text data, we need to perform the below steps.

- Loop through each text example of the dataset, tokenize them and populate the vocabulary of all unique tokens (words). A vocabulary is a simple mapping from tokens to integer index. Each token is assigned a unique index starting from integer 0.

- Tokenize each text example and retrieve indexes of tokens from the vocabulary.

- Retrieve GloVe embeddings for each token of vocabulary and create an embedding matrix.

- Set this matrix as the weight of the embedding layer of the neural network and prevent updating weights of this layer so that embeddings do not get updated. This embedding layer will be responsible for retrieving GloVe embeddings of tokens based on input indexes created in the 2nd step by indexing the weight matrix.

So basically we first map tokens to their indexes and then retrieve GloVe embeddings for these indexes by indexing the weight matrix of the embedding layer.

The first two steps are implemented in this section. The 3rd step is implemented in the next section and the 4th step will be implemented as the Embedding layer of the network.

The below image gives a simple idea about word embeddings.

1.1 Load Dataset¶

In this section, we have loaded the dataset that we are going to use for our text classification task. We have loaded AG NEWS dataset available from torchtext python library. The dataset has text documents for 4 different news categories (["World", "Sports", "Business", "Sci/Tech"]). The dataset is already divided into train and test sets. We have wrapped text examples and their respective target labels in ArrayDataset wrapper available from mxnet.gluon.data. This dataset object will be used later to create data loaders that will be used during the training process to loop through data in batches.

from mxnet.gluon.data import ArrayDataset

train_dataset, test_dataset = torchtext.datasets.AG_NEWS()

Y_train, X_train = zip(*list(train_dataset))

Y_test, X_test = zip(*list(test_dataset))

train_dataset = ArrayDataset(X_train, Y_train)

test_dataset = ArrayDataset(X_test, Y_test)

1.2 Define Tokenizer¶

In this section, we have defined a tokenizer. The tokenizer is a function that takes a text document as input and returns a list of tokens (words). We have defined a tokenizer using regular expression which captures a sequence of one or more characters in a text document. The tokenizer is wrapped in partial() function available from Python library functools. We have also explained with a simple example how tokenizer tokenizes text example.

import re

from functools import partial

tokenizer = partial(lambda X: re.findall(r"\w+", X))

tokenizer("Hello, How are you?")

1.3 Populate Vocabulary¶

In this section, we have populated a vocabulary using all unique tokens of datasets. In order to create vocabulary, we first need to create a dictionary that has all tokens as keys and their frequency in datasets as values. We have maintained this dictionary as Counter object available from the Python library collections. Initially, we have created an empty Counter object. Then, we are looping through each dataset and their text examples one by one calling count_tokens() function available from the Python library gluonnlp. The function takes a list of tokens of text example and Counter object. It updates the Counter object with tokens and their frequencies. After we have looped through all text examples of the dataset, the Counter object has all tokens. We can then create vocabulary by calling Vocab() constructor from the Python library gluonnlp. We have provided a counter object to the constructor for token details.

After initializing the vocabulary, we have also printed the number of tokens present in the vocabulary.

from collections import Counter

counter = Counter()

for dataset in [train_dataset, test_dataset]:

for X, Y in dataset:

gluonnlp.data.count_tokens(tokenizer(X), to_lower=True, counter=counter)

vocab = gluonnlp.Vocab(counter=counter, min_freq=1)

print("Vocabulary Size : {}".format(len(vocab)))

1.4 Define Vectorization Function¶

In this section, we have defined a vectorization function. This function will be used by data loaders later. It takes a batch of text examples and their target labels as input. Then, it tokenizes text examples and retrieves their indexes from the vocabulary. The different text documents have a different number of tokens but the neural network requires consistent size. Hence, we have set maximum token size per example to 50. All examples will have fixed 50 tokens. Those examples that have more than 50 tokens will be truncated to keep only 50 tokens and the examples that have less than 50 tokens will be padded with 0s to bring their length to 50 tokens. In the end, MXNet ndarray of token indexes for text examples and their target labels will be returned from the function.

After defining a function, we have also explained the usage with a simple example.

In this section, we have set the embedding length to 300 because we are going to use GloVe 840B embedding which has word embedding of length 300 for each token.

import gluonnlp.data.batchify as bf

from mxnet import nd

import numpy as np

max_tokens = 50

embed_len = 300

clip_seq = gluonnlp.data.ClipSequence(max_tokens)

pad_seq = gluonnlp.data.PadSequence(length=max_tokens, pad_val=0, clip=True)

def vectorize(batch):

X, Y = list(zip(*batch))

X = [[vocab(word) for word in tokenizer(text)] for text in X]

#X = [text+([""]* (max_words-len(text))) if len(text)<max_words else text[:max_words] for text in X] ## Bringing all samples to 50 words length.

X = [pad_seq(tokens) for tokens in X] ## Bringing all samples to 50 length

return nd.array(X, dtype=np.int32), nd.array(Y, dtype=np.int32) - 1 # Subtracting 1 from labels to bring them in range 0-3 from 1-4

%time X, Y = vectorize([["how are you", 1]])

X.shape, Y.shape

1.5 Create Data Loaders¶

In this section, we have simply created train and test data loaders using datasets we had created earlier. These data loaders will be used during the training process to loop through data in batches. The batch size is set at 1024 which means that a single batch will have 1024 examples and their labels. We have also provided the vectorization function we created in the previous cell to batchify_fn parameter. This function will be applied to each batch of data and the output of it will be a single batch of data.

Below, we have explained with a simple example how text example is vectorized.

text = "Hello, How are you? Where are you planning to go?"

tokens = ['hello', ',', 'how', 'are', 'you', '?', 'where',

'are', 'you', 'planning', 'to', 'go', '?']

vocab = {

'hello': 0,

'bye': 1,

'how': 2,

'the': 3,

'welcome': 4,

'are': 5,

'you': 6,

'to': 7,

'<unk>': 8,

}

vector = [0,8,2,4,6,8,8,5,6,8,7,8,8]from mxnet.gluon.data import ArrayDataset, DataLoader

train_loader = DataLoader(train_dataset, batch_size=1024, batchify_fn=vectorize)

test_loader = DataLoader(test_dataset, batch_size=1024, batchify_fn=vectorize)

target_classes = ["World", "Sports", "Business", "Sci/Tech"]

for X, Y in train_loader:

print(X.shape, Y.shape)

break

Approach 1: GloVe '840B' Flattened (Max Tokens=50, Embeddings Length=300) ¶

Our first approach used GloVe 840B word embeddings. The 840B embeddings has embeddings for 2.2 Million tokens. The embedding length for each token is 300. We'll retrieve embeddings for our populated vocabulary tokens from this 840B and then use it for our text classification task. As these are already trained embeddings, we'll freeze the embedding layer that prevents it from updating.

Below, we have simply listed different GloVe embeddings available.

gluonnlp.embedding.list_sources(embedding_name="Glove")

Load GloVe 840B Embeddings¶

The glove embedding is available from gluonnlp library. We just need to create an instance of GloVe() constructor with embedding name ('glove.840B.300d') and it'll create an GloVe instance. This instance can be treated like a dictionary. We can retrieve embeddings of tokens from it which we have explained in the example below.

glove_embeddings = gluonnlp.embedding.GloVe(source='glove.840B.300d')

glove_embeddings

glove_embeddings["hello"].shape, glove_embeddings["hello"][:50]

glove_embeddings["<unk>"], glove_embeddings[""]

Create Embeddings Matrix of Vocab Tokens using Glove Embeddings¶

In this section, we are simply looping through tokens of our populated vocabulary (from our datasets) and retrieving GloVe embeddings for tokens using GloVe object. We have wrapped all embeddings in a matrix of shape (vocab_len, 300) = (66505, 300). This matrix will become the weight matrix of the embedding layer.

%%time

vocab_embeddings = nd.zeros((len(vocab), embed_len), dtype=np.float32)

for i, token in enumerate(vocab.idx_to_token):

vocab_embeddings[i] = glove_embeddings[token]

vocab_embeddings[5][:10]

Define Network¶

In this section, we have defined a neural network that we'll use for our text classification task. The network consists of one embedding layer and 3 dense layers.

The first layer of the network is the embedding layer. We have created an embedding layer using Embedding() constructor. We have provided vocabulary length as a number of tokens and embedding length to the constructor. After creating the layer, we have initialized its weight with our embedding matrix that we had created in the previous cell. This will make our embedding matrix weight of the embedding layer. Later on, we have to make sure that this weight matrix is not updated during the training process as they are already trained embeddings. The embedding layer will take token indexes as input and retrieve GloVe embedding by indexing this weight matrix. The input data shape of the layer is (batch_size, max_tokens) = (batch_size, 50) and output shape is (batch_size, max_tokens, embed_len) = (batch_size, 50, 300).

The output of embedding layer is flattened which will transform data shape from (batch_size, 50, 300) to (batch_size, 50 x 300) = (batch_size, 15000).

The flattened data is given to a dense layer with 128 output units. This will transform data to shape (batch_size, 128) from (batch_size, 15000). The dense layer also applies relu activation on the output.

The output of the first dense layer is given to the second dense layer that has 64 output units. This will transform the shape to (batch_size, 64). It also applies relu activation to the output.

The output of the second dense layer is given to the third and last dense layer of the network that has 4 output units (same as a number of target classes). The output of the third dense layer is a prediction of the network which is of shape (batch_size, 4).

After defining the network, we have initialized and performed a forward pass to make predictions for verification purposes. We can notice a warning saying that the embedding layer weight is already initialized and it won't be initialized again with new weights which are good. We have also printed a summary of network layer output shapes and parameter counts.

We have created a network using Sequential API of Gluon module of MXNet. Please feel free to check the below link if you are new to MXNet and want to learn how to create neural networks using it.

from mxnet.gluon import nn

class EmbeddingClassifier(nn.Block):

def __init__(self, **kwargs):

super(EmbeddingClassifier, self).__init__(**kwargs)

embed_layer = nn.Embedding(len(vocab), embed_len) ## Create Embedding Layer

embed_layer.initialize() ## Initialize layer

embed_layer.weight.set_data(vocab_embeddings) ## Initialize it with GloVe Embeddings

self.seq = nn.Sequential()

self.seq.add(embed_layer)

self.seq.add(nn.Flatten()) ### Flatten Embeddings

self.seq.add(nn.Dense(128, activation="relu"))

self.seq.add(nn.Dense(64, activation="relu"))

self.seq.add(nn.Dense(len(target_classes)))

def forward(self, x):

logits = self.seq(x)

return logits #nd.softmax(logits)

model = EmbeddingClassifier()

model

from mxnet import init, initializer

model.initialize(initializer.Xavier())

preds = model(nd.random.randint(1,len(vocab), shape=(10,max_tokens)))

preds.shape

model.summary(nd.random.randint(1,len(vocab), shape=(10,max_tokens)))

Train Network¶

In this section, we have trained the network we defined in the previous section. We have defined a function for the training network. The function takes the train object (network parameters), train data loader, validation data loader, and a number of epochs as input. It then performs a training loop number of epochs time. For each epoch, it loops through training data in batches. For each batch of data, it performs a forward pass to make predictions, calculate loss value, calculate gradients, and update network parameters using gradients. It records loss for each batch of data and prints the average loss of the train data at the end of each epoch. We have also created a helper function to calculate validation loss and accuracy values which we are printing at the end of the epochs.

from mxnet import autograd

from tqdm import tqdm

from sklearn.metrics import accuracy_score

def MakePredictions(model, val_loader):

Y_actuals, Y_preds = [], []

for X_batch, Y_batch in val_loader:

preds = model(X_batch)

preds = nd.softmax(preds)

Y_actuals.append(Y_batch)

Y_preds.append(preds.argmax(axis=-1))

Y_actuals, Y_preds = nd.concatenate(Y_actuals), nd.concatenate(Y_preds)

return Y_actuals, Y_preds

def CalcValLoss(model, val_loader):

losses = []

for X_batch, Y_batch in val_loader:

val_loss = loss_func(model(X_batch), Y_batch)

val_loss = val_loss.mean().asscalar()

losses.append(val_loss)

print("Valid CrossEntropyLoss : {:.3f}".format(np.array(losses).mean()))

def TrainModelInBatches(trainer, train_loader, val_loader, epochs):

for i in range(1, epochs+1):

losses = [] ## Record loss of each batch

for X_batch, Y_batch in tqdm(train_loader):

with autograd.record():

preds = model(X_batch) ## Forward pass to make predictions

train_loss = loss_func(preds.squeeze(), Y_batch) ## Calculate Loss

train_loss.backward() ## Calculate Gradients

train_loss = train_loss.mean().asscalar()

losses.append(train_loss)

trainer.step(len(X_batch)) ## Update weights

#if i%5==0:

print("Train CrossEntropyLoss : {:.3f}".format(np.array(losses).mean()))

CalcValLoss(model, val_loader)

Y_actuals, Y_preds = MakePredictions(model, val_loader)

print("Valid Accuracy : {:.3f}".format(accuracy_score(Y_actuals.asnumpy(), Y_preds.asnumpy())))

Here, we are actually training our network using a training routine. We have initialized a number of epochs to 8 and the learning rate to 0.001. Then, we have initialized our text classification network, cross entropy loss, Adam optimizer, and Trainer object. The Trainer object has network parameters (collected by calling collect_params() method on the network) that will be updated during the training process.

Please make a NOTE that we have provided regular expression to collect_params() method. This regular expression will force it to collect parameters of only Dense layers and parameters of Embedding layer will be ignored and hence won't be updated (which is what we want. We don't want to update glove embeddings).

If you are interested in learning about how we can provide regular expressions for different situations to collect_params() method so that it collects and updates parameters (weights/biases) of specific layers of the network only then please check the below link. In our case, we only wanted to update the parameters of dense layers.

We can notice from the loss and accuracy values getting printed after each epoch that our model is doing a good job at the text classification task.

from mxnet import gluon

from mxnet.gluon import loss

from mxnet import autograd

from mxnet import optimizer

epochs=8

learning_rate = 0.001

model = EmbeddingClassifier()

model.initialize()

loss_func = loss.SoftmaxCrossEntropyLoss()

optimizer = optimizer.Adam(learning_rate=learning_rate)

trainer = gluon.Trainer(model.collect_params('dense'), optimizer) ## Training only parameters of dense layers. Ignoring embeding layer.

TrainModelInBatches(trainer, train_loader, test_loader, epochs)

Evaluate Network Performance¶

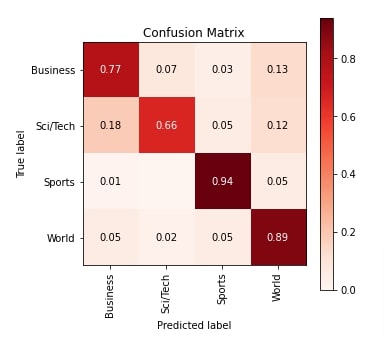

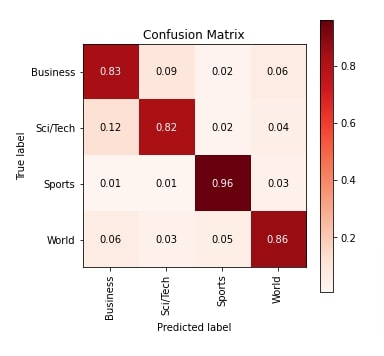

In this section, we have evaluated the performance of our trained network by calculating accuracy score, classification report (precision, recall, and f1-score) and confusion matrix metrics on test predictions. We can notice from the accuracy score that our model has done a good job at the classification task. We have calculated ML metrics using functions available from scikit-learn.

If you are interested in learning about various ML metrics available from sklearn for ML tasks then please check the below link. It covers the majority of them in detail

Apart from calculations, we have also created a visualization for confusion matrix using Python library scikit-plot. We can notice from the confusion matrix that our network is quite good at classifying text documents of categories Sports and World compared to Business and Sci/Tech categories.

The scikit-plot library is designed on top of Python library Matplotlib and provided visualization for many ML metrics. Please feel free to check the below link if you are interested in learning about it in depth.

from sklearn.metrics import accuracy_score, confusion_matrix, classification_report

Y_actuals, Y_preds = MakePredictions(model, test_loader)

print("Test Accuracy : {}".format(accuracy_score(Y_actuals.asnumpy(), Y_preds.asnumpy())))

print("Classification Report : ")

print(classification_report(Y_actuals.asnumpy(), Y_preds.asnumpy(), target_names=target_classes))

print("\nConfusion Matrix : ")

print(confusion_matrix(Y_actuals.asnumpy(), Y_preds.asnumpy()))

from sklearn.metrics import confusion_matrix

import scikitplot as skplt

import matplotlib.pyplot as plt

import numpy as np

skplt.metrics.plot_confusion_matrix([target_classes[i] for i in Y_actuals.asnumpy()], [target_classes[i] for i in Y_preds.asnumpy().astype(int)],

normalize=True,

title="Confusion Matrix",

cmap="Reds",

hide_zeros=True,

figsize=(5,5)

);

plt.xticks(rotation=90);

Explain Predictions using LIME Algorithm¶

In this section, we have explained predictions made by the network using LIME algorithm. It is a commonly used algorithm for explaining predictions of black-box ML models like neural networks. The Python library lime has an implementation of the algorithm. It let us create visualization highlighting words of text that contributed to predicting a particular target label.

If you are someone who is new to the concept of LIME and want to learn about it then we recommend that you go through the below links in your free time. It'll help you understand the concept better.

- How to Use LIME to Understand sklearn Models Predictions?

- LIME: Interpret Predictions Of Keras Text Classification Networks

Below, we have first simply retrieved test text examples and target labels.

X_test, Y_test = [], []

for X, Y in test_dataset:

X_test.append(X)

Y_test.append(Y-1)

Below, we have first created an instance of LimeTextExplainer which will be used to create an explanation object explaining predictions.

Then, we have created a prediction function. This function takes a batch of text examples as input and returns their prediction probabilities generated by the network. It tokenizes text examples, retrieves their indexes, and then gives them to the network to make predictions. The softmax function is applied to the output of the network to turn the output into probabilities.

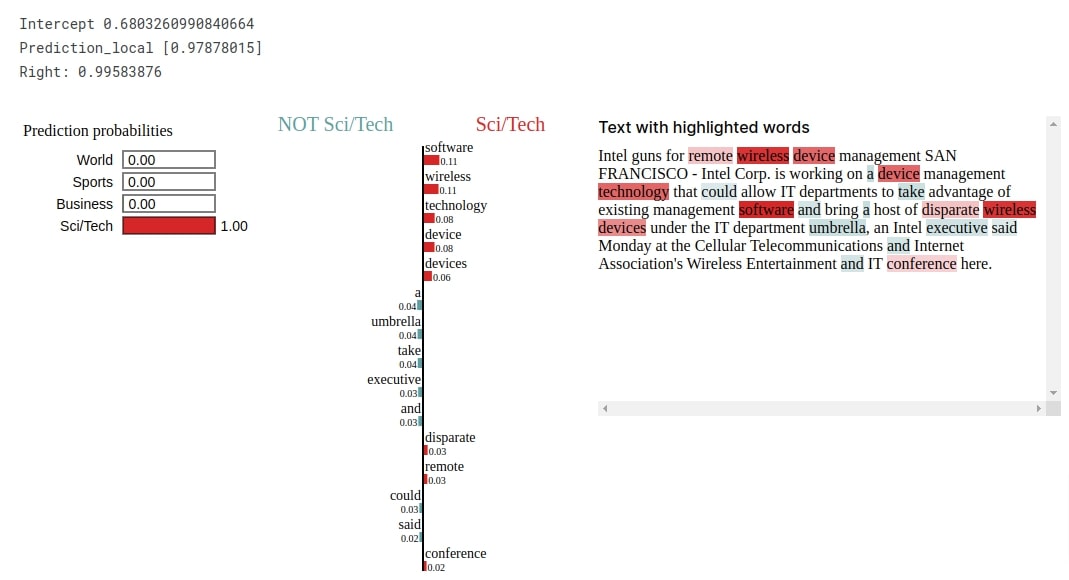

After defining a function, we randomly selected a text example from the test dataset and made predictions on it. Our network correctly predicts the target label as Sci/Tech for the selected text example. Now, we'll explain prediction by creating an explanation object.

from lime import lime_text

explainer = lime_text.LimeTextExplainer(class_names=target_classes, verbose=True)

def make_predictions(X_batch_text):

X_batch = [[vocab(word) for word in tokenizer(sample)] for sample in X_batch_text]

X_batch = [pad_seq(tokens) for tokens in X_batch] ## Bringing all samples to 50 length

logits = model(nd.array(X_batch, dtype=np.int32))

preds = nd.softmax(logits)

return preds.asnumpy()

rng = np.random.RandomState(124)

idx = rng.randint(1, len(X_test))

X_batch = [[vocab(word) for word in tokenizer(sample)] for sample in X_test[idx:idx+1]]

X_batch = [pad_seq(tokens) for tokens in X_batch] ## Bringing all samples to 50 length

preds = model(nd.array(X_batch)).argmax(axis=-1)

print("Actual : ", target_classes[Y_test[idx]])

print("Prediction : ", target_classes[int(preds.asnumpy()[0])])

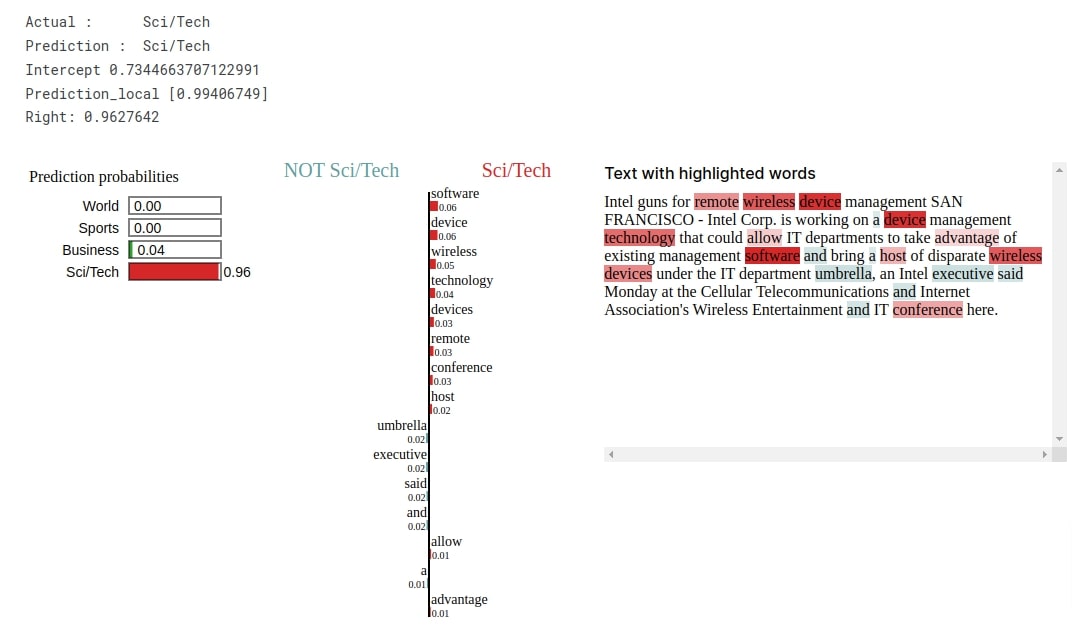

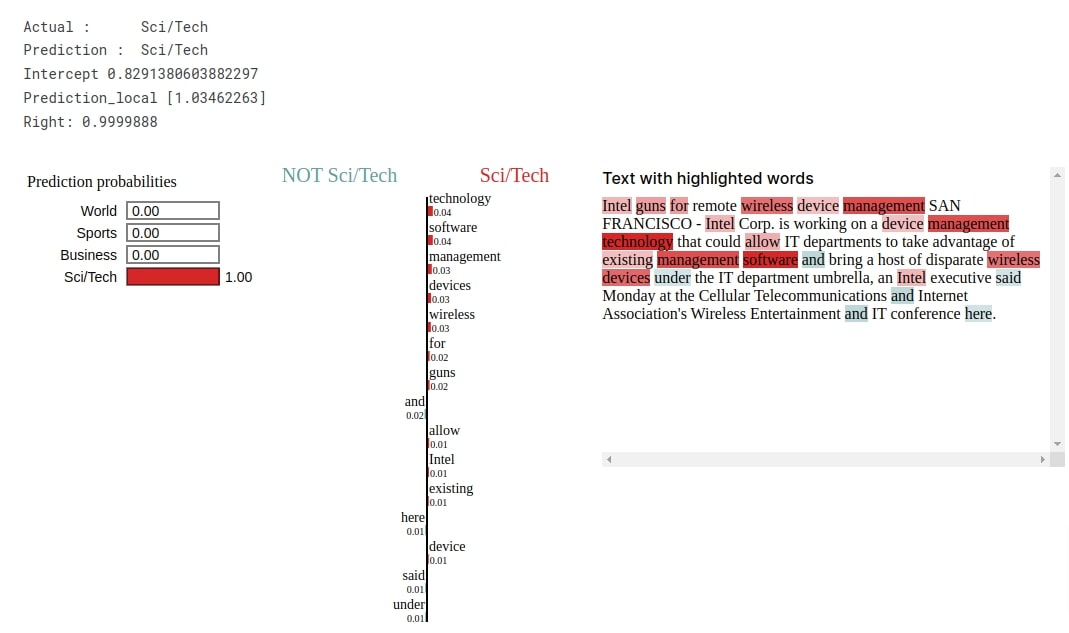

Below, we have first called explain_instance() method on LimeTextExplainer instance to create Explanation object. We have provided a selected text example, prediction function, and target label to the method. Then, we have called show_in_notebook() method on the explanation instance to create the visualization. The visualization shows that words like 'software', 'wireless', 'technology', 'devices', 'remote', 'conference', etc are contributing to predicting target label as Sci/Tech. This makes sense as these are commonly used words in the technology world.

explanation = explainer.explain_instance(X_test[idx], classifier_fn=make_predictions, labels=Y_test[idx:idx+1], num_features=15)

explanation.show_in_notebook()

Approach 2: GloVe '840B' Averaged (Max Tokens=50, Embeddings Length=300) ¶

Our approach in this section has minor changes in the way we handle GloVe embeddings. In this section, we are averaging embeddings that come from the embedding layer instead of flattening it like in the previous section. This is the only change in the architecture of the network. The majority of the code is the same as in our previous section with only a change in network architecture.

Define Network¶

Below, we have defined a network that we'll use for our text classification task in this section. The network has the same layers defined in init() method as in our previous section. The only difference is there in forward() method where we are calling mean() method on the output of the embedding layer to average embeddings at a token level before giving it to the dense layer. The embeddings of all tokens of each example will be averaged. This will change shape of data from (batch_size, max_tokens, embed_len) =(batch_size, 50, 300) to (batch_size, embed_len) = (batch_size, 300). The rest of the dense layers are the same as our previous approach.

As usual, after defining the network, we have initialized it and performed a forward pass to make sure that it works as expected. We have also printed the summary of the layer's output shapes and parameters counts.

from mxnet.gluon import nn

class EmbeddingClassifier(nn.Block):

def __init__(self, **kwargs):

super(EmbeddingClassifier, self).__init__(**kwargs)

self.word_embeddings = nn.Embedding(len(vocab), embed_len) ## Create Embedding Layer

self.word_embeddings.initialize()

self.word_embeddings.weight.set_data(vocab_embeddings) ## Initialize it with GloVe Embeddings

self.dense1 = nn.Dense(128, activation="relu")

self.dense2 = nn.Dense(64, activation="relu")

self.dense3 = nn.Dense(len(target_classes))

def forward(self, x):

x = self.word_embeddings(x)

x = x.mean(axis=1) ## Average Embeddings of All tokens of Single Text Examples

x = self.dense1(x)

x = self.dense2(x)

logits = self.dense3(x)

return logits #nd.softmax(logits)

model = EmbeddingClassifier()

model

from mxnet import init, initializer

model.initialize(initializer.Xavier())

preds = model(nd.random.randint(1,len(vocab), shape=(10,max_tokens)))

preds.shape

model.summary(nd.random.randint(1,len(vocab), shape=(10,max_tokens)))

Train Network¶

Below, we have trained our network using exactly the same settings that we had used for our previous approach. We can notice from the loss and accuracy values getting printed that the network is doing a good job at the classification task.

from mxnet import gluon

from mxnet.gluon import loss

from mxnet import autograd

from mxnet import optimizer

epochs=8

learning_rate = 0.001

model = EmbeddingClassifier()

model.initialize()

loss_func = loss.SoftmaxCrossEntropyLoss()

optimizer = optimizer.Adam(learning_rate=learning_rate)

trainer = gluon.Trainer(model.collect_params('dense'), optimizer) ## Training only parameters of dense layers. Ignoring embeding layer.

TrainModelInBatches(trainer, train_loader, test_loader, epochs)

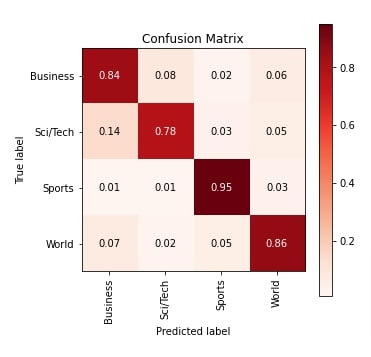

Evaluate Network Performance¶

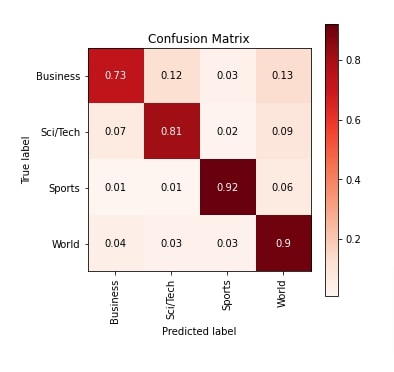

In this section, we have evaluated the performance of our trained network by calculating accuracy score, classification report and confusion matrix metrics on test predictions. We can notice from the accuracy score that there is quite an improvement over the previous approach. We have also plotted the confusion matrix for reference purposes which also indicates improvement in performance.

from sklearn.metrics import accuracy_score, confusion_matrix, classification_report

Y_actuals, Y_preds = MakePredictions(model, test_loader)

print("Test Accuracy : {}".format(accuracy_score(Y_actuals.asnumpy(), Y_preds.asnumpy())))

print("Classification Report : ")

print(classification_report(Y_actuals.asnumpy(), Y_preds.asnumpy(), target_names=target_classes))

print("\nConfusion Matrix : ")

print(confusion_matrix(Y_actuals.asnumpy(), Y_preds.asnumpy()))

from sklearn.metrics import confusion_matrix

import scikitplot as skplt

import matplotlib.pyplot as plt

import numpy as np

skplt.metrics.plot_confusion_matrix([target_classes[i] for i in Y_actuals.asnumpy()], [target_classes[i] for i in Y_preds.asnumpy().astype(int)],

normalize=True,

title="Confusion Matrix",

cmap="Reds",

hide_zeros=True,

figsize=(5,5)

);

plt.xticks(rotation=90);

Explain Network Predictions using LIME Algorithm¶

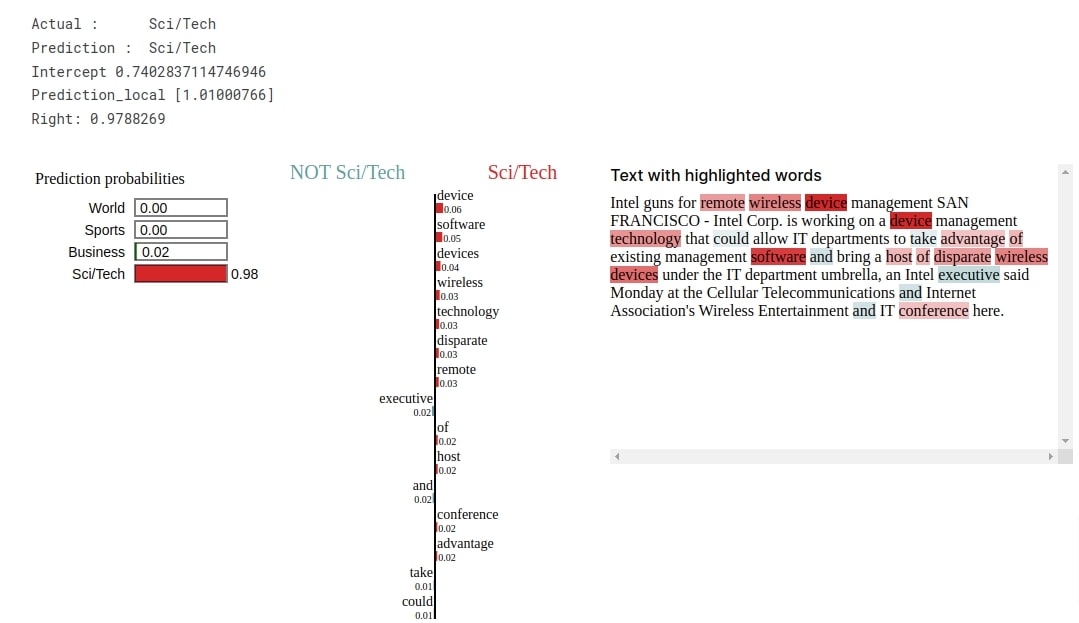

In this section, we have explained predictions made by the network using LIME algorithm. Our network correctly predicts the target label as Sci/Tech for randomly selected text example from the test dataset. The visualization shows that words like 'software', 'device', 'wireless', 'technology', 'remote', 'conference', 'host', etc are contributing to predicting target label as Sci/Tech.

from lime import lime_text

explainer = lime_text.LimeTextExplainer(class_names=target_classes, verbose=True)

rng = np.random.RandomState(124)

idx = rng.randint(1, len(X_test))

X_batch = [[vocab(word) for word in tokenizer(sample)] for sample in X_test[idx:idx+1]]

X_batch = [pad_seq(tokens) for tokens in X_batch] ## Bringing all samples to 50 length

preds = model(nd.array(X_batch)).argmax(axis=-1)

print("Actual : ", target_classes[Y_test[idx]])

print("Prediction : ", target_classes[int(preds.asnumpy()[0])])

explanation = explainer.explain_instance(X_test[idx], classifier_fn=make_predictions, labels=Y_test[idx:idx+1], num_features=15)

explanation.show_in_notebook()

Approach 3: GloVe '840B' Summed (Max Tokens=50, Embeddings Length=300) ¶

Our approach in this section uses the same architecture as our previous approach with a minor change. In this section, we have summed the embeddings of tokens instead of averaging them. The majority of the code is the same as in the previous section with the only difference in network architecture.

Define Network¶

Below, we have defined a network that we'll use for our text classification task in this section. The network has exactly the same architecture as our previous example with the only change that we are calling sum() function on the output of the embedding layer. All other are laid out exactly the same as earlier.

After defining the network, we initialized it and performed a forward pass to make predictions for verification purposes. We have also printed the summary of layer output size and parameters counts.

from mxnet.gluon import nn

class EmbeddingClassifier(nn.Block):

def __init__(self, **kwargs):

super(EmbeddingClassifier, self).__init__(**kwargs)

self.word_embeddings = nn.Embedding(len(vocab), embed_len) ## Create Embedding Layer

self.word_embeddings.initialize()

self.word_embeddings.weight.set_data(vocab_embeddings) ## Initialize it with GloVe Embeddings

self.dense1 = nn.Dense(128, activation="relu")

self.dense2 = nn.Dense(64, activation="relu")

self.dense3 = nn.Dense(len(target_classes))

def forward(self, x):

x = self.word_embeddings(x)

x = x.sum(axis=1) ## Sum Embeddings of All tokens of Single Text Examples

x = self.dense1(x)

x = self.dense2(x)

logits = self.dense3(x)

return logits #nd.softmax(logits)

model = EmbeddingClassifier()

model

from mxnet import init, initializer

model.initialize(initializer.Xavier())

preds = model(nd.random.randint(1,len(vocab), shape=(10,max_tokens)))

preds.shape

model.summary(nd.random.randint(1,len(vocab), shape=(10,max_tokens)))

Train Network¶

In this section, we have trained our network using exactly the same settings that we have been using for all our approaches. We can notice from the loss and accuracy values getting printed after each epoch that our network is doing a good job.

from mxnet import gluon

from mxnet.gluon import loss

from mxnet import autograd

from mxnet import optimizer

epochs=8

learning_rate = 0.001

model = EmbeddingClassifier()

model.initialize()

loss_func = loss.SoftmaxCrossEntropyLoss()

optimizer = optimizer.Adam(learning_rate=learning_rate)

trainer = gluon.Trainer(model.collect_params('dense'), optimizer) ## Training only parameters of dense layers. Ignoring embeding layer.

TrainModelInBatches(trainer, train_loader, test_loader, epochs)

Evaluate Network Performance¶

In this section, we have evaluated the performance of our trained network by calculating accuracy score, classification report and confusion matrix metrics on test predictions. We can notice from the accuracy score that it is a little better compared to our previous approach. We have also plotted the confusion matrix for reference purposes which shows that almost all categories are doing better now.

from sklearn.metrics import accuracy_score, confusion_matrix, classification_report

Y_actuals, Y_preds = MakePredictions(model, test_loader)

print("Test Accuracy : {}".format(accuracy_score(Y_actuals.asnumpy(), Y_preds.asnumpy())))

print("Classification Report : ")

print(classification_report(Y_actuals.asnumpy(), Y_preds.asnumpy(), target_names=target_classes))

print("\nConfusion Matrix : ")

print(confusion_matrix(Y_actuals.asnumpy(), Y_preds.asnumpy()))

from sklearn.metrics import confusion_matrix

import scikitplot as skplt

import matplotlib.pyplot as plt

import numpy as np

skplt.metrics.plot_confusion_matrix([target_classes[i] for i in Y_actuals.asnumpy()], [target_classes[i] for i in Y_preds.asnumpy().astype(int)],

normalize=True,

title="Confusion Matrix",

cmap="Reds",

hide_zeros=True,

figsize=(5,5)

);

plt.xticks(rotation=90);

Explain Network Predictions using LIME Algorithm¶

In this section, we have explained the predictions using LIME algorithm. Our network correctly predicts the target label as Sci/Tech for the selected text example from the test dataset. The visualization highlights that words like 'device', 'software', 'wireless', 'technology', 'disparate', 'remote', 'host', 'conference', etc are contributing to predicting target label as Sci/Tech.

from lime import lime_text

explainer = lime_text.LimeTextExplainer(class_names=target_classes, verbose=True)

rng = np.random.RandomState(124)

idx = rng.randint(1, len(X_test))

X_batch = [[vocab(word) for word in tokenizer(sample)] for sample in X_test[idx:idx+1]]

X_batch = [pad_seq(tokens) for tokens in X_batch] ## Bringing all samples to 50 length

preds = model(nd.array(X_batch)).argmax(axis=-1)

print("Actual : ", target_classes[Y_test[idx]])

print("Prediction : ", target_classes[int(preds.asnumpy()[0])])

explanation = explainer.explain_instance(X_test[idx], classifier_fn=make_predictions, labels=Y_test[idx:idx+1], num_features=15)

explanation.show_in_notebook()

Approach 4: GloVe '42B' Flattened (Max Tokens=50, Embeddings Length=300) ¶

Our approach in this section is exactly the same as our approach in the first section with the only difference being that we are using GloVe 42B.300d embeddings in this section. It uses the same network architecture that flattens embeddings as in our first approach. We'll see whether this embedding helps us improve performance over the first. The GloVe 42B.300d has embeddings for 1.9 million tokens and embeddings size is 300.

Load Glove 42B Embeddings¶

In this section, we have simply loaded GloVe 42B.300d embedding using GloVe() constructor available from gluonnlp Python library.

glove_embeddings = gluonnlp.embedding.GloVe(source='glove.42B.300d')

glove_embeddings

Create Embeddings Matrix of Vocab Tokens using Glove Embeddings¶

In this section, we have created an embedding matrix using tokens of our vocabulary. The embeddings for tokens of our vocabulary are retrieved from GloVe embeddings. This matrix will become the weight matrix of the embedding layer later on.

%%time

vocab_embeddings = nd.zeros((len(vocab), embed_len), dtype=np.float32)

for i, token in enumerate(vocab.idx_to_token):

vocab_embeddings[i] = glove_embeddings[token]

vocab_embeddings[5][:10]

Define Network¶

In this section, we have defined a network that we'll use for our classification task in this section. The network architecture is exactly the same as our first approach with the only difference being that the weight matrix of the embedding layer now consists of GloVe 42B.300d embeddings.

from mxnet.gluon import nn

class EmbeddingClassifier(nn.Block):

def __init__(self, **kwargs):

super(EmbeddingClassifier, self).__init__(**kwargs)

embed_layer = nn.Embedding(len(vocab), embed_len) ## Create Embedding Layer

embed_layer.initialize()

embed_layer.weight.set_data(vocab_embeddings) ## Initialize it with GloVe Embeddings

self.seq = nn.Sequential()

self.seq.add(embed_layer)

self.seq.add(nn.Flatten()) ### Flatten Embeddings

self.seq.add(nn.Dense(128, activation="relu"))

self.seq.add(nn.Dense(64, activation="relu"))

self.seq.add(nn.Dense(len(target_classes)))

def forward(self, x):

logits = self.seq(x)

return logits #nd.softmax(logits)

model = EmbeddingClassifier()

model

Train Network¶

Below, we have trained our network using exactly the same settings that we have been using for all our approaches. We can notice from the loss and accuracy scores that our network is doing a good job at the classification task.

from mxnet import gluon

from mxnet.gluon import loss

from mxnet import autograd

from mxnet import optimizer

epochs=8

learning_rate = 0.001

model = EmbeddingClassifier()

model.initialize()

loss_func = loss.SoftmaxCrossEntropyLoss()

optimizer = optimizer.Adam(learning_rate=learning_rate)

trainer = gluon.Trainer(model.collect_params(), optimizer)

TrainModelInBatches(trainer, train_loader, test_loader, epochs)

Evaluate Network Performance¶

In this section, we have evaluated the performance of our network by calculating accuracy score, classification report and confusion matrix metrics on test predictions. We can notice from the accuracy score that it is better compared to our first approach but a little less compared to the other two approaches. We have also plotted the confusion matrix for reference purposes.

from sklearn.metrics import accuracy_score, confusion_matrix, classification_report

Y_actuals, Y_preds = MakePredictions(model, test_loader)

print("Test Accuracy : {}".format(accuracy_score(Y_actuals.asnumpy(), Y_preds.asnumpy())))

print("Classification Report : ")

print(classification_report(Y_actuals.asnumpy(), Y_preds.asnumpy(), target_names=target_classes))

print("\nConfusion Matrix : ")

print(confusion_matrix(Y_actuals.asnumpy(), Y_preds.asnumpy()))

from sklearn.metrics import confusion_matrix

import scikitplot as skplt

import matplotlib.pyplot as plt

import numpy as np

skplt.metrics.plot_confusion_matrix([target_classes[i] for i in Y_actuals.asnumpy()], [target_classes[i] for i in Y_preds.asnumpy().astype(int)],

normalize=True,

title="Confusion Matrix",

cmap="Reds",

hide_zeros=True,

figsize=(5,5)

);

plt.xticks(rotation=90);

Explain Network Predictions using LIME Algorithm¶

In this section, we have explained predictions made by the network using LIME algorithm. The network correctly predicts the target label as Sci/Tech for randomly selected text example from the test dataset. The visualization shows that words like 'technology', 'software', 'management', 'devices', 'wireless', 'guns', 'intel', etc are contributing to predicting target category as Sci/Tech.

from lime import lime_text

explainer = lime_text.LimeTextExplainer(class_names=target_classes, verbose=True)

rng = np.random.RandomState(124)

idx = rng.randint(1, len(X_test))

X_batch = [[vocab(word) for word in tokenizer(sample)] for sample in X_test[idx:idx+1]]

X_batch = [pad_seq(tokens) for tokens in X_batch] ## Bringing all samples to 50 length

preds = model(nd.array(X_batch)).argmax(axis=-1)

print("Actual : ", target_classes[Y_test[idx]])

print("Prediction : ", target_classes[int(preds.asnumpy()[0])])

explanation = explainer.explain_instance(X_test[idx], classifier_fn=make_predictions, labels=Y_test[idx:idx+1], num_features=15)

explanation.show_in_notebook()

6. Results Summary and Further Recommendations ¶

| Approach | Max Tokens | Embedding Length | Test Accuracy (%) |

|---|---|---|---|

| GloVe '840B' Flattened | 50 | 300 | 81.17 |

| GloVe '840B' Averaged | 50 | 300 | 85.57 |

| GloVe '840B' Summed | 50 | 300 | 86.60 |

| GloVe '42B' Flattened | 50 | 300 | 84.03 |

Further Suggestions¶

- Try different tokens per text example.

- Try different functions on embeddings other than average and sum.

- Train network for more epochs.

- Try other GloVe embeddings like 42B, 27B, 6B, etc.

- Add more dense layers with different output units.

- Try different weight initializers.

- Try different optimizers.

- Try different activation functions.

- Try learning rate schedulers

Sunny Solanki

Sunny Solanki

![YouTube Subscribe]() Comfortable Learning through Video Tutorials?

Comfortable Learning through Video Tutorials?

If you are more comfortable learning through video tutorials then we would recommend that you subscribe to our YouTube channel.

![Need Help]() Stuck Somewhere? Need Help with Coding? Have Doubts About the Topic/Code?

Stuck Somewhere? Need Help with Coding? Have Doubts About the Topic/Code?

When going through coding examples, it's quite common to have doubts and errors.

If you have doubts about some code examples or are stuck somewhere when trying our code, send us an email at coderzcolumn07@gmail.com. We'll help you or point you in the direction where you can find a solution to your problem.

You can even send us a mail if you are trying something new and need guidance regarding coding. We'll try to respond as soon as possible.

![Share Views]() Want to Share Your Views? Have Any Suggestions?

Want to Share Your Views? Have Any Suggestions?

If you want to

- provide some suggestions on topic

- share your views

- include some details in tutorial

- suggest some new topics on which we should create tutorials/blogs

Comfortable Learning through Video Tutorials?

Comfortable Learning through Video Tutorials? Stuck Somewhere? Need Help with Coding? Have Doubts About the Topic/Code?

Stuck Somewhere? Need Help with Coding? Have Doubts About the Topic/Code? Want to Share Your Views? Have Any Suggestions?

Want to Share Your Views? Have Any Suggestions? mxnet, glove-embeddings, text-classification

mxnet, glove-embeddings, text-classification