
Generative Adversarial Networks (GANs)¶

Generative adversarial networks (GANs) are a class of deep learning models introduced by Ian Goodfellow and colleagues in 2014. Prominent machine learning researcher Yann LeCun has described GANs as the coolest idea in machine learning in the last 20 years. Since their introduction, many variants of the same idea have been proposed, such as Deep Convolutional GAN, Bidirectional GAN, Conditional GAN, Stack GAN, Info GAN, Disco GAN (Discover Cross-Domain Relations with GANs), and Style GAN. In this article, we discuss the core idea behind GANs and its applications.

How GAN Works (Architectural Overview)¶

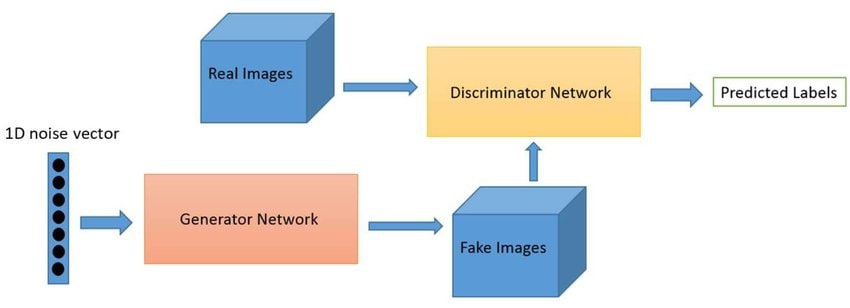

Generative adversarial networks are a type of deep generative model trained through an adversarial process. A GAN consists of two neural networks that compete with each other during training:

- Generative Neural Network (G).

- Discriminative Neural Network (D).

Both of these networks are trained simultaneously.
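One way to make the adversarial process concrete is the two-player objective from the original GAN paper, shown here as a sketch. The symbols are notation introduced for this illustration and are not used elsewhere in the article: $p_{\text{data}}$ is the training data distribution, $p_z$ is the distribution of the random input, $G(z)$ is the generator's output for random input $z$, and $D(x)$ is the discriminator's estimate of the probability that $x$ came from the training data.

$$\min_G \max_D V(D, G) = \mathbb{E}_{x \sim p_{\text{data}}}\big[\log D(x)\big] + \mathbb{E}_{z \sim p_z}\big[\log\big(1 - D(G(z))\big)\big]$$

The discriminator tries to make $V$ large by assigning high probability to real samples and low probability to generated ones, while the generator tries to make $V$ small by producing samples that the discriminator scores as real.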

Generative Model¶

The generative network is responsible for generating new data that the discriminator cannot distinguish from the training data. It is trained to capture the distribution of the training data and to produce new samples that look as if they were drawn from that distribution, thereby fooling the discriminative model. The generator takes random data (noise) as input and transforms it into a sample resembling the training data. It's generally designed as a multi-layer perceptron or a deconvolutional (transposed convolution) neural network.
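As a rough illustration of "random data in, sample out", here is a minimal sketch of a generator written as a tiny two-layer perceptron in plain Python. Everything in it is an assumption made for the example: the helper name `make_generator`, the layer sizes, the `tanh` activations, and the fixed random weights. The weights are untrained, so the output is not yet meaningful data; the sketch only shows the shape of the mapping.

```python
import math
import random


def make_generator(noise_dim, hidden_dim, out_dim, seed=0):
    """Build a tiny two-layer perceptron G(z) with fixed (untrained) random weights."""
    rng = random.Random(seed)
    w1 = [[rng.gauss(0.0, 0.5) for _ in range(noise_dim)] for _ in range(hidden_dim)]
    w2 = [[rng.gauss(0.0, 0.5) for _ in range(hidden_dim)] for _ in range(out_dim)]

    def generator(z):
        # Hidden layer: weighted sum of the noise vector followed by tanh.
        hidden = [math.tanh(sum(w * v for w, v in zip(row, z))) for row in w1]
        # Output layer: tanh keeps every output value inside [-1, 1].
        return [math.tanh(sum(w * h for w, h in zip(row, hidden))) for row in w2]

    return generator


noise_rng = random.Random(42)
generator = make_generator(noise_dim=8, hidden_dim=16, out_dim=4)
z = [noise_rng.gauss(0.0, 1.0) for _ in range(8)]  # random input vector
sample = generator(z)                              # generated "data point"
print(sample)
```

In a real GAN the weights would be updated by backpropagation so that the outputs drift toward the training distribution.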

Discriminative Model¶

The discriminative network is responsible for predicting whether a given sample came from the training data or was produced by the generator. It takes a sample as input and outputs the probability that the sample belongs to the training distribution. It is trained on original training samples as well as generated ones so that it learns the difference between the two. It's generally designed as a multi-layer perceptron or convolutional neural network.
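For intuition about the probability a discriminator is trying to output, consider a toy case where both distributions are known exactly. The original GAN paper shows that, for a fixed generator, the best possible discriminator is `p_data(x) / (p_data(x) + p_g(x))`. The sketch below evaluates that formula for two 1-D Gaussians; the Gaussian setting, the function names, and the chosen means are assumptions for illustration, since real training data has no closed-form density.

```python
import math


def gaussian_pdf(x, mean, std):
    """Density of a 1-D normal distribution at x."""
    u = (x - mean) / std
    return math.exp(-0.5 * u * u) / (std * math.sqrt(2.0 * math.pi))


def ideal_discriminator(x, data_mean, data_std, gen_mean, gen_std):
    """Probability that x is real, assuming real and generated samples are equally likely."""
    p_data = gaussian_pdf(x, data_mean, data_std)
    p_gen = gaussian_pdf(x, gen_mean, gen_std)
    return p_data / (p_data + p_gen)


# Generator far from the data: a point near the data mean looks clearly real.
far_apart = ideal_discriminator(3.0, data_mean=3.0, data_std=1.0, gen_mean=0.0, gen_std=1.0)
# Generator matches the data exactly: the discriminator can only guess.
matched = ideal_discriminator(3.0, data_mean=3.0, data_std=1.0, gen_mean=3.0, gen_std=1.0)
print(far_apart, matched)
```

When the two distributions coincide, the ratio is exactly 0.5 at every point, which is the situation the generator is trying to reach.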

Training Process¶

The training process follows a framework that corresponds to a two-player minimax game. In this game, the discriminator tries its best to tell images produced by the generator apart from actual training samples, while the generator tries its best to fool the discriminator into believing that its images came from the training data. In theory, the game reaches equilibrium when the discriminator predicts a probability of 0.5 for every sample, real or generated, because it can no longer tell them apart. At that stage the generator has approximately captured the distribution of the training samples and can independently convert random data into samples that look like training data. Both the discriminator and the generator can be defined as multilayer perceptrons, and the whole system can be trained using backpropagation. The two networks are trained together, in practice by alternating updates: the discriminator is updated to maximize its accuracy at labeling real and generated samples correctly, and the generator is updated to minimize its own loss by producing images realistic enough that the discriminator mislabels them.
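The alternating updates can be sketched on a deliberately tiny problem. The setup below is entirely an assumption chosen to keep the gradients writable by hand: the "training data" is a 1-D Gaussian with mean `REAL_MEAN`, the generator is `G(z) = z + shift` with a single learnable number, and the discriminator is a logistic unit `D(x) = sigmoid(w * x + c)`. The generator step maximizes `log D(G(z))`, the non-saturating alternative suggested in the original GAN paper, rather than the pure minimax loss. Step count, batch size, and learning rate are arbitrary. This is a toy, not how image GANs are implemented.

```python
import math
import random

REAL_MEAN = 3.0  # assumed mean of the toy "training data"


def sigmoid(t):
    """Numerically stable logistic function."""
    if t >= 0:
        return 1.0 / (1.0 + math.exp(-t))
    e = math.exp(t)
    return e / (1.0 + e)


def train_toy_gan(steps=2000, batch=64, lr=0.05, seed=0):
    rng = random.Random(seed)
    w, c = 0.0, 0.0  # discriminator parameters: D(x) = sigmoid(w * x + c)
    shift = 0.0      # generator parameter:      G(z) = z + shift
    for _ in range(steps):
        real = [rng.gauss(REAL_MEAN, 1.0) for _ in range(batch)]
        fake = [rng.gauss(0.0, 1.0) + shift for _ in range(batch)]

        # Discriminator step: gradient ascent on log D(real) + log(1 - D(fake)).
        grad_w = grad_c = 0.0
        for x in real:
            d = sigmoid(w * x + c)
            grad_w += (1.0 - d) * x
            grad_c += 1.0 - d
        for x in fake:
            d = sigmoid(w * x + c)
            grad_w -= d * x
            grad_c -= d
        w += lr * grad_w / batch
        c += lr * grad_c / batch

        # Generator step: gradient ascent on log D(G(z)) with fresh noise.
        fake = [rng.gauss(0.0, 1.0) + shift for _ in range(batch)]
        grad_shift = sum((1.0 - sigmoid(w * x + c)) * w for x in fake) / batch
        shift += lr * grad_shift
    return shift, w, c


final_shift, final_w, final_c = train_toy_gan()
d_at_real_mean = sigmoid(final_w * REAL_MEAN + final_c)
print("generator shift:", final_shift)
print("D at the real mean:", d_at_real_mean)
```

The generator never sees the real samples directly; it only receives a gradient through the discriminator, yet its shift is pulled from 0 toward the real mean. Because the logistic discriminator here is linear in `x`, this toy can only match the mean of the data, not its full shape.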

Application of GAN¶

- Increasing Image Resolution (Super resolution)

- Applying various styles/themes to images

- Creating photos of non-existent fashion models

- Creating imaginary portraits, wallpapers, etc.

- DeOldify/Image Colorization.

- Deepfake images/videos (a risky application with serious potential for misuse)

- Some Cool GAN Projects

GAN Variants¶

We'll now briefly discuss a few GAN variants that have become well known over time.

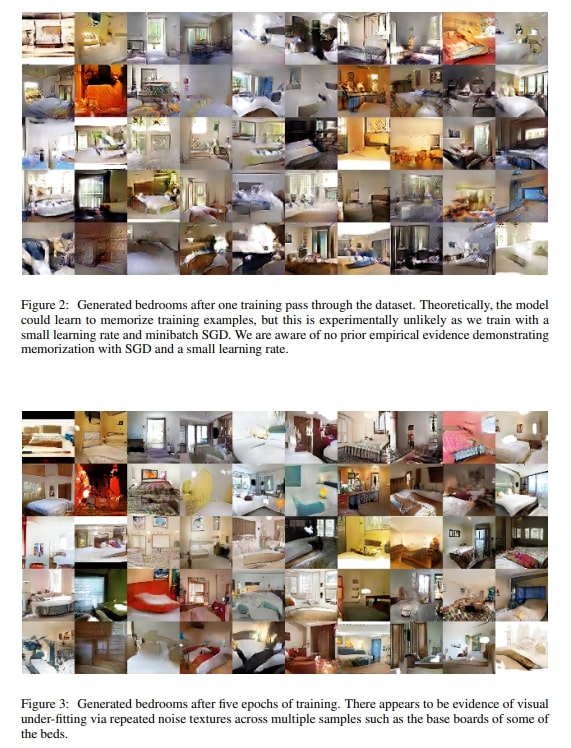

1. Deep Convolutional GANs (DCGANs)¶

DCGANs are an improved version of GANs that generate better-looking images than a plain GAN built from multilayer perceptrons. A DCGAN uses deconvolution (transposed convolution) layers in the generator and convolution layers in the discriminator. They can also be used for style/theme transfer.
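To see how deconvolution layers grow a small feature map into an image while convolution layers shrink it back down, the sketch below applies the standard output-size formulas for the two layer types (no dilation, no output padding). The kernel size 4, stride 2, padding 1, and the 4x4 starting map are assumed values that are common in DCGAN-style tutorials, not settings taken from this article.

```python
def transposed_conv_output_size(size, kernel, stride, padding):
    """Spatial size after a transposed convolution (deconvolution) layer."""
    return (size - 1) * stride - 2 * padding + kernel


def conv_output_size(size, kernel, stride, padding):
    """Spatial size after an ordinary convolution layer."""
    return (size + 2 * padding - kernel) // stride + 1


def layer_sizes(start, layers, size_fn, kernel=4, stride=2, padding=1):
    """Apply the same layer geometry repeatedly and record each spatial size."""
    sizes = [start]
    for _ in range(layers):
        sizes.append(size_fn(sizes[-1], kernel, stride, padding))
    return sizes


generator_sizes = layer_sizes(4, 4, transposed_conv_output_size)
discriminator_sizes = layer_sizes(64, 4, conv_output_size)
print(generator_sizes)      # [4, 8, 16, 32, 64]
print(discriminator_sizes)  # [64, 32, 16, 8, 4]
```

With these assumed settings each generator layer doubles the width and height, and each discriminator layer halves them, so the two networks mirror each other.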

2. Conditional GANs (cGANs)¶

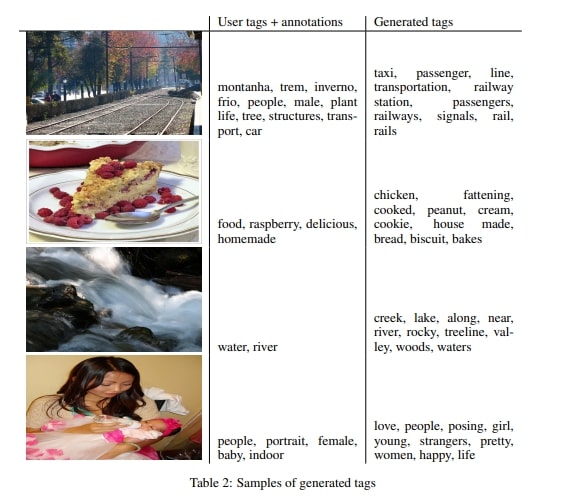

Conditional GANs use extra label information passed to the model to better control what is generated. Both the generator and the discriminator are conditioned on these labels.
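A common and simple way to condition both networks is to append a one-hot encoding of the label to each network's input. The sketch below shows only that input-building step; the function names, the one-hot encoding, and plain concatenation are assumptions for illustration, and other conditioning schemes exist.

```python
def one_hot(label, num_classes):
    """Encode an integer class label as a one-hot list of floats."""
    if not 0 <= label < num_classes:
        raise ValueError("label must be in [0, num_classes)")
    return [1.0 if i == label else 0.0 for i in range(num_classes)]


def generator_input(noise, label, num_classes):
    """Noise vector with the label appended: what a conditional generator would consume."""
    return list(noise) + one_hot(label, num_classes)


def discriminator_input(sample, label, num_classes):
    """Sample with the label appended: what a conditional discriminator would consume."""
    return list(sample) + one_hot(label, num_classes)


noise = [0.1, -0.4, 0.7]
g_in = generator_input(noise, label=2, num_classes=4)
d_in = discriminator_input([0.9, 0.2], label=2, num_classes=4)
print(g_in)  # [0.1, -0.4, 0.7, 0.0, 0.0, 1.0, 0.0]
print(d_in)  # [0.9, 0.2, 0.0, 0.0, 1.0, 0.0]
```

Because the discriminator sees the label too, a realistic image paired with the wrong label can still be rejected, which pushes the generator to respect the label it was given.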

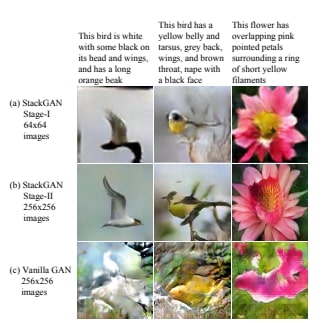

3. Stack GAN¶

The Stack GAN authors proposed a way to generate high-quality images from text descriptions. In Stack GAN, the models are conditioned on the text description, which is given as extra information.

It is a two-stage process: the first stage sketches the primitive shape of the object based on the text description, producing a low-resolution image; the second stage takes that low-resolution image together with the text description as input and generates a high-resolution image with realistic details.

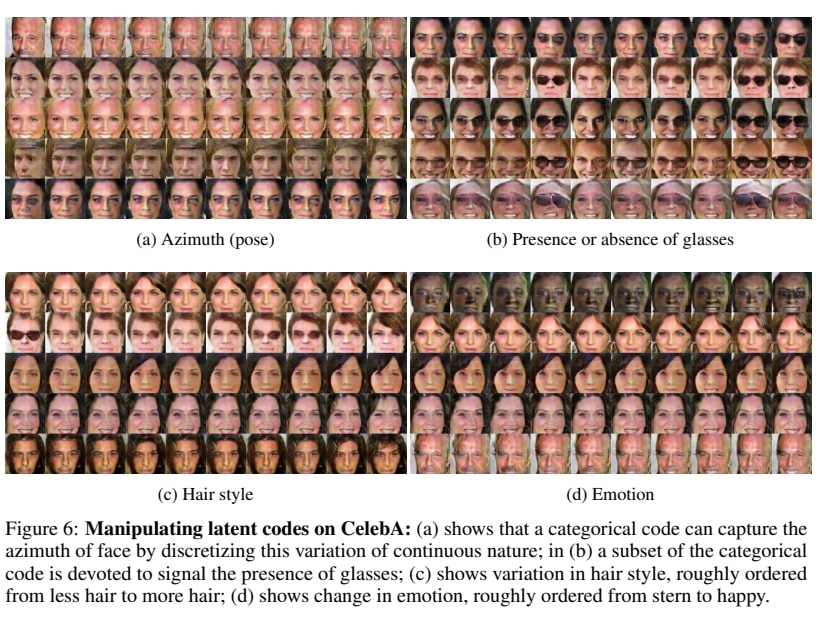

4. InfoGANs¶

InfoGANs can learn disentangled representations of data. InfoGAN was successful in disentangling writing styles from digit shapes on MNIST and pose from lighting on 3D rendered images. It can also discover the presence/absence of glasses, hairstyles, and emotions on the CelebA face dataset.

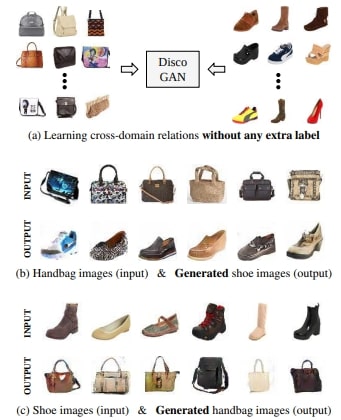

5. Discover Cross-Domain Relations with GANs (Disco GANs)¶

Disco GANs learn relations between data from different domains and can then transfer style from one domain to another. In essence, the model learns the style of one kind of object and applies the same style/theme to another.

There are many more types of GANs; we have covered only a few well-known ones above.

Advantages of GAN¶

- GANs are an unsupervised learning method and can be trained with data without labels.

- GANs can generate data that are very similar to real data.

- GANs learn the distribution of data.

- GANs don't need Monte Carlo approximations for training, unlike Boltzmann machines.

Disadvantages of GAN¶

- The generator must not be trained too much without updating the discriminator, to avoid "the Helvetica Scenario", in which the generator maps too many different random inputs to the same output and loses the diversity needed to model the training distribution.

- Super-resolution GANs do not recover the actual ground truth of images/videos when increasing resolution; they make guesses based on what they saw during training. This makes them a poor fit for applications that need the true original detail, which a GAN may not be able to recover.

- Training GANs is difficult because it's hard to find the right amount of training for the generator so that it does not overfit and start reproducing training samples.

- When increasing image/video resolution, the results look realistic but are not real: the model guesses details and can create patterns that were never in the original.

References¶

Below are some references that were consulted while writing this article. All images were taken from the research papers themselves.

Sunny Solanki
